- December 14 2023

Complete Guide to Web Performance Testing

Web performance testing is the key testing process of websites and web apps under varying loads to find performance-related issues. This tutorial discusses web performance testing in detail, including its concept, significance, and how to execute it.

OVERVIEW

Web performance testing is a way of checking how well software applications perform under different conditions. Those conditions may be low bandwidth, high user traffic, etc. This testing method is specifically used to validate and evaluate the web performance, as any issue could lead to poor quality of the software applications. Here, web performance denotes various things like stability, scalability, speed, and responsiveness, all tested with different levels of traffic and load. But why is this test so important?

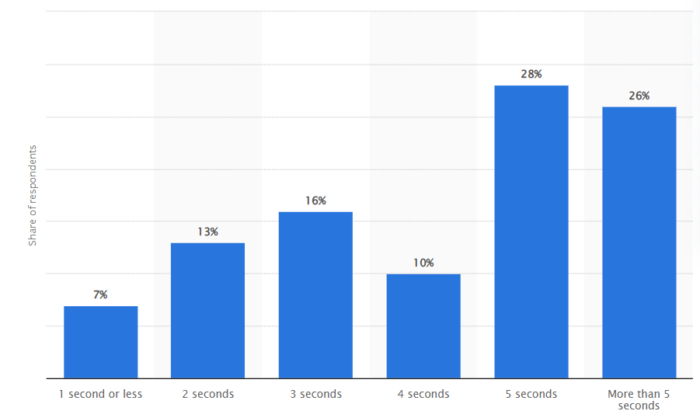

According to one survey, 1 in 4 mobile users leave websites that take longer than 4 seconds to load. Further, Statista reports that only 26% of users are willing to wait more than 5 seconds for a page to load on their mobile devices. Hence, organizations are increasingly focused on improving the performance of web apps and websites to meet high user expectations. This is where testers rely heavily on web performance testing.

In the early days, simple static, server-rendered websites with minimal interactions were tested mostly through manual and exploratory testing. Things have changed with the arrival of interactive applications featuring dynamic content creation, DOM manipulation, and jQuery, along with tools like QTP (Unified Functional Testing). As web applications change, testing and monitoring solutions need to keep up. As a result, web performance testing has become necessary when developing any new or complex web application or website, as it evaluates how well they work in diverse conditions.

Despite the utility of a new complex software application, its performance is susceptible to factors like reliability, resource usage, and scalability. Introducing applications with inadequate performance metrics to the market can impact their reputation and impede sales goals. Web performance testing aims to identify and address potential bottlenecks that could affect the optimal performance of software applications.

This tutorial will discuss web performance testing in detail, highlighting its benefits, challenges, how to perform it, and other concepts. Such information will help you get started with web performance testing.

What is Web Performance Testing?

Web performance testing can be understood as the key testing process of websites and web apps under varying loads to find any performance-related issues. Those issues include network latency, inefficient database queries, server overloads, etc. Executing web performance testing aims to eliminate such performance bottlenecks that impact the overall user experience and efficiency of websites and web applications. It also allows testers to determine the software application's speed, scalability, and stability.

However, if web performance testing is skipped, the application may show sluggish response times and poor interaction between users and the application. This results in a poor user experience (UX). Performance testing for web applications is crucial for evaluating whether the developed software applications meet speed, responsiveness, and stability criteria when subjected to various workloads, ultimately ensuring a more positive UX.

Key performance indicators for web performance testing include:

- Browser, page, and network response times

- Server request processing times

- Acceptable concurrent user volumes

- Processor and memory consumption

- Identification of error types and frequencies that may occur within the application

Organizations increasingly implement web performance testing to provide accurate insights into the preparedness of web applications. This involves testing the website and monitoring server-side applications. Web performance tests simulate loads similar to real conditions to evaluate whether the applications can handle the expected load. This approach helps the developers identify and resolve performance bottlenecks, enhancing overall system performance.

In summary, web performance testing:

- Ensures the application meets performance requirements

- Identifies computational bottlenecks within the application

- Validates the performance levels committed by the software vendor

- Evaluates the stability of the software/application under various concurrent user scenarios

The Objective of Web Performance Testing

Web performance testing aims to verify and ensure appropriate functionality and working of the web application and website in conditions like user traffic, diverse user interaction, and others. Here are some key objectives of web performance testing:

- Detecting software application bottlenecks: The test process identifies and helps fix any part of the application that may impact its overall performance. Common issues include poor scalability and application crashes.

- Ensure cross-platform stability: Web performance testing efficiently verifies the consistency of web applications across different platforms, browsers, and devices.

- Compliance with SLAs, Contracts, and Regulations: Validate that the web application meets the specified Service Level Agreements (SLAs), contractual obligations, and regulatory requirements, ensuring adherence to industry standards and legal guidelines.

- Preventing application degradation: Web performance testing checks that the performance of the web application does not decline when a major code change is made.

Now that you have learned about the crucial objectives of web performance testing, let us understand the key performance-related issues detected during the test process.

Common Issues with Performance

Performance-related challenges typically center around speed, response time, load time, and inadequate scalability. The speed of software applications is frequently a critical feature, as a sluggish software application risks losing potential users. The significance of speed is underscored by performance testing, which ensures that software applications operate swiftly enough to captivate and retain user attention. The subsequent list outlines prevalent performance concerns, highlighting the recurring theme of speed:

- Extended Load Time – Load time denotes the initial duration for a software application to initiate. Ideally, this duration should be minimized. While certain software applications may pose challenges in achieving sub-minute load times, efforts should be made to maintain load times within a few seconds if feasible.

- Inadequate Response Time – Response time measures the interval between a user entering data into the software application and the application providing a corresponding output. Swift response times are generally desirable, as prolonged waits may lead to user disinterest.

- Limited Scalability – Poor scalability manifests when a software application struggles to manage the expected user volume or fails to cater to a broad range of users. Conducting load testing becomes imperative to confirm the software application's capacity to handle anticipated user numbers.

- Bottleneck Occurrences – Bottlenecks denote issues or errors within software applications that degrade overall performance. These occurrences arise when coding errors or hardware issues reduce throughput under specific loads. Identifying the code section causing the slowdown, which is often traced back to a single faulty code segment, is important in addressing bottleneck issues.

Fixing bottlenecks typically involves rectifying poorly performing processes or introducing additional hardware. Common performance bottlenecks include CPU utilization, memory usage, network congestion, limitations imposed by the operating system, and disk usage.

Now, let us learn about the significance of web performance testing.

Significance of Web Performance Testing

Performance testing is important for various reasons, the foremost being a world-class experience for the user. Here are the reasons why maintaining the performance of your websites and web applications matters.

- Impact on web performance: Ensuring that your websites and web applications perform under load or stress is important because it directly influences sales. For instance, if your website or web application fails to load quickly or does not meet users' expectations, they will likely leave and seek what they need elsewhere. This results in the loss of potential users and revenue to a competitor. Hence, web performance testing is essential in ensuring seamless performance and functionality of the software applications under stress and load.

- Ensure continuous testing: Performance testing is not a one-time activity. Regular performance tests help confirm that your websites and web applications function normally, operate efficiently, and consistently deliver a good user experience during high-traffic periods.

- Revenue protection through test: Any issues or bottlenecks identified during testing can be addressed continuously to avoid affecting actual visitors in the live environment. This instills confidence in internal business stakeholders that your websites and web applications can handle increased visitor traffic and spikes when launching the next significant promotion.

- Enhanced User Experience: According to Google, over half of all visitors abandon sites that take more than 3 seconds to load. The longer a website takes to load, the higher the chances of users leaving. Slow-loading sites test users' patience and create an unpleasant experience, making them less likely to stay or return, negatively impacting your business reputation. Conversely, a quick interaction with websites leads to instant fulfillment of user demands, creating a pleasant experience.

- Increased Conversions: Website speed and performance significantly influence conversion rates and consumer satisfaction. Longer user stays on your website increase the likelihood of conversion, such as filling out a registration form, making a purchase, or subscribing to a newsletter, directly improving business metrics.

- Performance Insight: Performance testing helps identify the nature or location of software-related performance problems by highlighting potential areas of failure or lag. Organizations can leverage this testing to ensure readiness for predictable major events.

Need for Web Performance Testing

Performance testing is one of the most crucial software testing categories, yet numerous organizations neglect regular performance tests, whether due to a perceived lack of importance or budgetary constraints. Regardless of the rationale, organizations risk substantial losses by omitting performance testing from the development cycle. As previously emphasized, the user experience can make or break a sale. Users will likely bounce if your website or web application fails to perform as intended. Once they bounce, regaining their attention becomes a challenge. Performance testing can pinpoint such bottlenecks before anything reaches the live production environment.

For websites or applications expecting high traffic from visitors, customers, or internal users, omitting performance testing is risky. While your marketing and sales teams effectively promote, engage, and sell your company's services and products, neglecting to ensure your website or application operates optimally under load poses the risk of dissatisfying users. This dissatisfaction may result in losing any potential brand loyalty they initially had before arriving at your site. To avoid the risk of losing potential customers, it's imperative to invest time and resources in web performance testing.

When to Perform Web Performance Testing?

Performance tests are appropriate once functional testing is complete. For web and mobile applications, the life cycle comprises two key phases: development and deployment. During testing, operations teams expose the application's components, such as web services, microservices, and APIs, to the end users of the application architecture.

Development performance tests specifically target these components. The earlier these components undergo testing, the quicker any anomalies can be identified, resulting in lower rectification costs.

Developers have the option to compose performance tests, and they can also be integrated into the code review procedures. The scenarios for performance test cases are transferable across environments, from development teams conducting tests in a live environment to environments monitored by operations teams. Performance testing includes quantitative evaluation conducted in a laboratory or production environment.

However, as the application evolves, the scope of performance tests should expand. In certain instances, these tests may occur during deployment, mainly when replicating a production environment in the development lab proves challenging or expensive.

What is Measured in Web Performance Testing?

Web performance testing allows you to evaluate various success factors, such as response times and potential errors. With these performance measures, you can confidently pinpoint bottlenecks, bugs, and errors, enabling informed decisions on optimizing your software application to resolve the identified issues. The common issues measured by web performance tests are mainly related to speed, response times, load times, and scalability. Here are some of the crucial measures in web performance testing:

- Load time: As discussed earlier, a long load time is a major performance-related issue in a software application. It is defined as the total time the web page or web app takes to load in the user’s browser, be it on a desktop or mobile device. With performance testing, it is possible to measure the total time taken to load all resources, like HTML, CSS, JavaScript, images, etc.

- Response time: Web performance testing measures the time web applications take to respond to user requests. It includes the time spent processing the request on the servers, generating the response, and transmitting it to the users.

- Page size: Page size refers to the total size of all resources (HTML, CSS, JavaScript, images, etc.) that must be downloaded to render a web page. Web performance testing helps verify that a web page stays small enough to load quickly.

- Time to First Byte (TTFB): TTFB is the time the browser takes to receive the first byte of the server's response after making a request. It reflects the network latency, the server processing time, and the time spent generating the response.
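
The measures above can be approximated for a single resource with Python's standard library. The sketch below is a minimal illustration rather than a full page-load measurement: it times one GET request, treats the arrival of the response headers as the first byte, and does not fetch linked CSS, JavaScript, or images. The function name `measure_request` and its result keys are assumptions made for this example.

```python
import http.client
import time


def measure_request(host, port, path="/", timeout=10.0):
    """Return status, TTFB, total time (seconds), and body size for one GET."""
    conn = http.client.HTTPConnection(host, port, timeout=timeout)
    try:
        start = time.perf_counter()
        # The TCP connection is opened here, so connect time is included.
        conn.request("GET", path)
        # getresponse() returns once the status line and headers have arrived;
        # this is used as an approximation of time to first byte.
        response = conn.getresponse()
        ttfb = time.perf_counter() - start
        body = response.read()  # download the remainder of the response
        total = time.perf_counter() - start
    finally:
        conn.close()
    return {
        "status": response.status,
        "ttfb_s": ttfb,
        "total_s": total,
        "size_bytes": len(body),
    }
```

Calling `measure_request` against a test server (host and port are whatever your environment uses) returns one sample; a dedicated tool is still needed to measure a complete page with all of its resources.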

Now that you have learned about web performance testing and its related concepts, it is equally important to know its different types that help address its aim.

Types of Web Performance Testing

Web performance testing includes several different types that evaluate the overall performance and working of software applications. When you hear of web performance testing, you may think only of load and stress testing, but other testing types also fall under this category. Here are some of the common types of web performance testing.

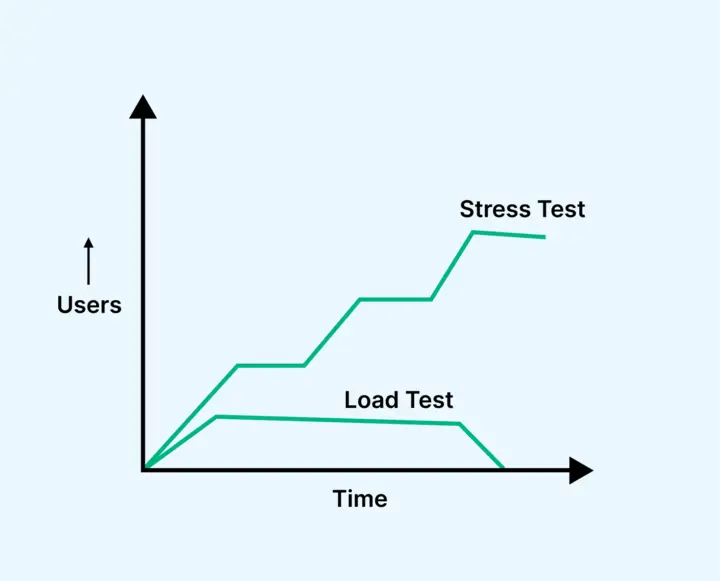

Load Testing

Load testing helps developers understand how software applications behave under a designated load value. Throughout the load testing procedure, an organization replicates the anticipated number of concurrent users and transactions over a specified timeframe to validate projected response times and pinpoint bottlenecks. This testing variant helps developers measure the application's user-handling capacity before its official launch. It aims to confirm that the software application handles the load within specific performance thresholds.

Moreover, developers can subject specific functionalities of software applications, like a web page's checkout cart, to load testing. Integrating load testing into a continuous integration process enables a team to promptly verify changes to a codebase using automation tools like Jenkins.
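
As a rough illustration of the idea, the following Python sketch simulates a number of concurrent users with a thread pool and summarizes the observed response times. It is a minimal sketch, not a replacement for a load testing tool: `simulated_request` is a hypothetical stand-in for a real transaction (for example, a checkout request), and the function names and result keys are assumptions made for this example.

```python
import math
import time
from concurrent.futures import ThreadPoolExecutor
from statistics import mean


def simulated_request():
    """Hypothetical stand-in for one user transaction (e.g. an HTTP call)."""
    time.sleep(0.01)
    return True  # True means the transaction succeeded


def timed_call(action):
    """Run one transaction and return (elapsed_seconds, succeeded)."""
    start = time.perf_counter()
    try:
        ok = bool(action())
    except Exception:
        ok = False  # any exception counts as a failed transaction
    return time.perf_counter() - start, ok


def run_load_test(action, concurrent_users, requests_per_user):
    """Simulate concurrent users, each issuing several requests in sequence."""
    def user_session(user_id):
        return [timed_call(action) for _ in range(requests_per_user)]

    with ThreadPoolExecutor(max_workers=concurrent_users) as pool:
        sessions = list(pool.map(user_session, range(concurrent_users)))

    samples = [sample for session in sessions for sample in session]
    latencies = sorted(elapsed for elapsed, _ in samples)
    errors = sum(1 for _, ok in samples if not ok)
    # Nearest-rank 95th percentile, clamped to the last index.
    p95_index = min(len(latencies) - 1, math.ceil(0.95 * len(latencies)) - 1)
    return {
        "requests": len(samples),
        "avg_s": mean(latencies),
        "p95_s": latencies[p95_index],
        "max_s": latencies[-1],
        "error_rate": errors / len(samples),
    }
```

For example, `run_load_test(simulated_request, concurrent_users=50, requests_per_user=10)` issues 500 simulated transactions. Threads on one machine can only approximate a modest load; larger loads are usually generated by dedicated tools, often from several machines.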

Stress Testing

Stress testing, also called fatigue testing, evaluates the software application's performance under abnormal user conditions. It validates how the software application responds beyond typical load scenarios and identifies the first failure components. The primary objective is to determine the software's breaking point and when it malfunctions.

Furthermore, stress testing verifies the software application's proficiency in error management during challenging conditions. This evaluation measures the software application's data processing capabilities and responsiveness to high traffic volumes.

The significance of stress testing is underscored by several factors:

- Verification of the software application's functionality under abnormal conditions.

- Ensuring the display of appropriate error messages when the software application is under stress.

- Recognizing that software application failure under extreme conditions can lead to substantial revenue loss.

- Emphasizing the importance of preparedness for extreme conditions through the execution of Stress Testing.

While executing stress testing, there may be instances where massive data sets are employed, and there is a risk of data loss. Testers must prioritize preserving security-related data during stress testing to mitigate potential risks.

Stress testing includes two categories that allow software testers to better understand the workload's scalability.

- Soak Testing

- Spike Testing

Soak testing, also known as endurance testing, imitates a gradual escalation of end users over time to evaluate a system's long-term viability. Throughout the test, the test engineer closely observes key performance indicators (KPIs), such as memory usage, and measures potential failures, including instances of memory shortages. Soak tests verify throughput and response times following sustained usage to ascertain whether these metrics remain consistent with their initial status.

Spike testing, a subset of stress testing, appraises a software application’s performance when subjected to a sudden and substantial surge in simulated end users. These tests help determine the software application’s ability to repeatedly manage a rapid and pronounced increase in workload over a brief period. Like stress tests, spike tests are typically executed by IT teams before significant events where the software application is anticipated to encounter higher-than-normal traffic volumes.
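
The difference between the two categories is easiest to see in the shape of the load over time. The sketch below expresses each as a list of virtual-user counts per minute; the function names, parameters, and numbers are illustrative assumptions, not settings from any particular tool.

```python
def soak_profile(target_users, ramp_minutes, hold_minutes):
    """Ramp up gradually, then hold the load for a long period."""
    ramp = [
        round(target_users * (minute + 1) / ramp_minutes)
        for minute in range(ramp_minutes)
    ]
    return ramp + [target_users] * hold_minutes


def spike_profile(baseline_users, spike_users, total_minutes,
                  spike_start, spike_length):
    """Hold a baseline load with one sudden, short burst of extra users."""
    return [
        spike_users
        if spike_start <= minute < spike_start + spike_length
        else baseline_users
        for minute in range(total_minutes)
    ]


# A short ramp followed by a hold (a real soak test holds for hours).
print(soak_profile(100, 4, 3))          # [25, 50, 75, 100, 100, 100, 100]
# A two-minute burst from 50 to 500 users.
print(spike_profile(50, 500, 6, 2, 2))  # [50, 50, 500, 500, 50, 50]
```

A load generator would then be driven minute by minute from such a profile while memory usage, throughput, and response times are monitored.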

Capacity Testing

A capacity test measures the software application's ability to accommodate a specific number of concurrent users before experiencing a breakdown.

Capacity testing shares similarities with stress testing, as it evaluates traffic loads based on user numbers but diverges in the quantity evaluated. Typically linked to capacity planning, this test determines the software application's architecture, which includes different factors like server quantity, memory capacity, and server speed, aligned with business requirements.

A "capacity test" pertains to the maximum number of users an architecture can handle under regular operational circumstances. Resembling load tests, which often focus on more targeted user activities, a capacity test seeks to establish the overall user capacity that software applications can handle.

Scalability Testing

Scalability testing considers whether the software applications can effectively manage increasing loads consistently. In simpler terms, it evaluates performance by measuring the software application's capability to scale performance up and down. For instance, testers might perform a scalability test by gradually increasing the number of user requests. This involves systematically augmenting the load volume while observing the software application's response. Alternatively, the load can be maintained constantly while exploring variations in factors such as memory, bandwidth, and more.

Scalability testing is divided into two types:

- Upward scalability testing: The number of users is increased on a specific scale until testers reach the software application's crash point. It is done to find the maximum capacity of the software application.

- Downward scalability testing: This type is performed when load testing is not passed. Testers decrease the number of software application users at specific intervals until optimum performance is reached.
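
The step-by-step increase used in upward scalability testing can be sketched as a simple loop. In the example below, `simulated_response_time` is a hypothetical model of a system that degrades beyond 1000 users; in a real test, that function would run an actual load step and return the measured response time. All names and numbers are assumptions made for illustration.

```python
def simulated_response_time(users):
    """Hypothetical system model: flat up to 1000 users, then degrading."""
    if users <= 1000:
        return 2.0
    return 2.0 + (users - 1000) * 0.01


def find_max_users(measure, start_users, step, goal_seconds, hard_limit):
    """Increase the load step by step; return the last load that met the goal."""
    last_passing = None
    users = start_users
    while users <= hard_limit:
        if measure(users) > goal_seconds:
            break  # first load level that misses the goal
        last_passing = users
        users += step
    return last_passing


# With a 3.5 second goal, the modeled system passes at 1100 users
# (3.0 s) and fails at 1200 users (4.0 s).
print(find_max_users(simulated_response_time, 800, 100, 3.5, 2000))  # 1100
```

The `hard_limit` parameter is a safety stop so that the ramp ends even when the system never misses the goal.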

Volume Testing

Volume testing, or flood testing, is a form of software testing designed to evaluate a software application's performance with a specified quantity of data. This quantity can pertain to the size of a database or the dimensions of an interface file being analyzed in the volume testing process. To execute volume tests, a sample file size is generated by including either a modest dataset or a more extensive volume, and the application's functionality and performance are subsequently tested against this file size. The primary objective of volume testing is to analyze how the software application’s response time and behavior are affected when the volume of data within the database is escalated.
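
A minimal sketch of this idea, assuming an in-memory SQLite database as a stand-in for the real data store: the same query is timed against tables of increasing size. The table name, columns, and query are hypothetical and chosen only for illustration.

```python
import sqlite3
import time


def time_query_at_volume(row_count):
    """Load row_count rows into an in-memory table and time one query."""
    conn = sqlite3.connect(":memory:")
    try:
        conn.execute("CREATE TABLE orders (id INTEGER PRIMARY KEY, amount REAL)")
        conn.executemany(
            "INSERT INTO orders (amount) VALUES (?)",
            ((float(i % 100),) for i in range(row_count)),
        )
        conn.commit()
        start = time.perf_counter()
        # No index on amount, so this query scans the whole table.
        (matches,) = conn.execute(
            "SELECT COUNT(*) FROM orders WHERE amount > 50"
        ).fetchone()
        elapsed = time.perf_counter() - start
    finally:
        conn.close()
    return matches, elapsed


def run_volume_test(volumes):
    """Return {row_count: query_seconds} for each data volume."""
    return {rows: time_query_at_volume(rows)[1] for rows in volumes}
```

Running `run_volume_test([1_000, 100_000, 1_000_000])` gives one timing per volume, which shows how the response time changes as the amount of data grows. Absolute timings depend on the machine, so the trend matters more than any single value.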

Difference Between Performance and Functional Testing

In the above section, you learned about the different types of web performance testing. You might consider performance testing and functional testing to be quite similar, since both verify a software application. However, they are not the same; the differences are subtle but important.

Here are some of the key differences between the two.

| Performance Testing | Functional Testing |
|---|---|
| Verifies the software's 'performance' | Validates the software's 'behavior' |
| Focuses on meeting user expectations | Concentrates on fulfilling user requirements |
| Requires automation for comprehensive coverage | Can be executed through automated or manual methods |
| Involves customer, tester, developer, DBA, and network management team | Involves customer, tester, and developer only |
| Demands a production-like test environment and hardware to simulate load | Production-sized test environment not mandatory; hardware requirements are minimal |

Performance Testing Example

To understand performance testing better, let us take a specific example of web performance testing using Apache JMeter, focusing on scenarios with increasing user loads.

Scenario: Testing the Upward Scalability of an eCommerce Website

Objective: Evaluate the behavior of an eCommerce website under increasing user loads.

Scenarios:

Desired Load: 1000 Users

- Goal Time: 2.7 seconds per request.

- Result: Pass

- Explanation: The test is successful since the actual load (1000 users) equals the desired load, and the response time meets the goal time of 2.7 seconds.

Increased Load: 1100 Users

- Goal Time: 3.5 seconds per request.

- Result: Pass (Stress Testing)

- Explanation: The test passes as the actual load (1100 users) exceeds the desired load (1000). This scenario is considered stress testing, and the system is expected to handle the increased load with a slightly higher response time of 3.5 seconds.

Further Increased Load: 1200 Users

- Goal Time: 3.5 seconds per request.

- Result: Fail

- Explanation: The test fails because, at 1200 users, the load exceeds the desired load (1000) and the response time is higher than the specified goal time of 3.5 seconds. This indicates that the system cannot handle the increased load.

Even Higher Load: 1300 Users

- Goal Time: 4 seconds per request.

- Result: Fail

- Explanation: The test fails again as the actual load (1300 users) exceeds the desired load, and the response time exceeds the specified goal time of 4 seconds.

Maximum Load: 1400 Users

- Result: Crashed

- Explanation: The system crashes when the load reaches 1400 users, indicating that the application cannot handle this level of concurrent users, leading to a complete failure.

As the above example shows, web performance testing lets you identify how much load a software application can manage and withstand. It also makes it possible to understand how the application functions under extreme test scenarios.

Web Performance Test Cases Example

Some web performance test cases are given below that will help you understand the key concepts of the actual test process.

- Test Scenario 01: Confirm that the website's response time does not exceed 4 seconds when 1000 users access it simultaneously.

- Test Scenario 02: Ensure that the response time of the Application Under Load falls within an acceptable range, specifically when the network connectivity is slow.

- Test Scenario 03: Determine the maximum number of users the application can handle before experiencing a crash.

- Test Scenario 04: Evaluate the execution time of the database when handling simultaneous read/write operations on 500 records.

- Test Scenario 05: Verify the CPU and memory usage of the application and the database server under peak load conditions.

- Test Scenario 06: Validate the response time of the application under varying load conditions, including low, normal, moderate, and heavy loads.

During the execution of performance tests, ambiguous terms such as "acceptable range" and "heavy load" are replaced with specific numerical values. Performance engineers establish these values based on business requirements and the technical characteristics of the application.

Web Performance Testing Approaches

When performing web performance testing, there are two major approaches: manual testing and automation testing. However, it is crucial to note that performance testing using a manual approach is impractical. Here are some key reasons:

- When executing the web performance testing, testers must mimic many virtual users using the application to simulate real-world usage scenarios and load. If this is done manually, there might be a need for a huge number of human testers to run simultaneous tests with the software application.

- To run a web performance test using a manual approach, there will be a need for a substantial number of resources like hardware, database, servers, and time, making this approach expensive.

- While performing the test, testers are supposed to measure the performance of software applications by evaluating response time, throughput, and resource utilization. When such metrics are tracked manually, there is a huge chance of error and inaccuracy that make the test fail.

- Performance testing needs to be repeatable to address changes and improvements accurately. Manual testing may not reproduce test scenarios consistently, leading to variations in results.

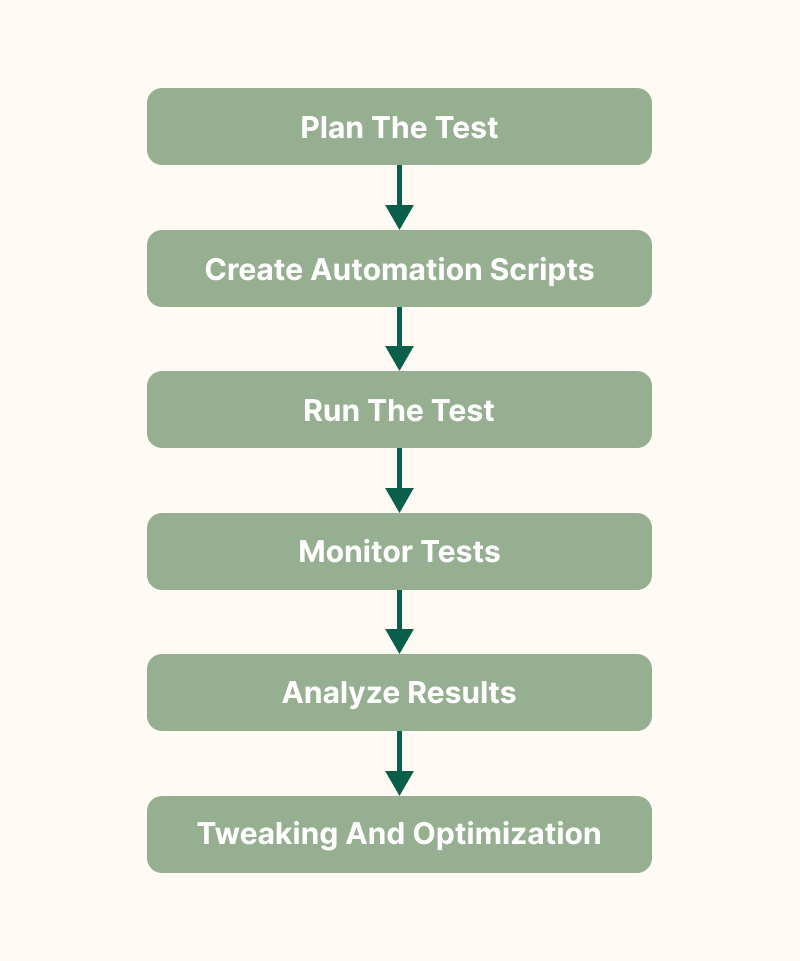

Need for Automation of Web Performance Testing

The goal of each performance tester is to prevent bottlenecks from emerging in the Agile development process. To avoid this, incorporating as much automation into the performance testing process as possible can help. To do so, it’s necessary to run tests automatically in the context of continuous integration and to automate design and maintenance tasks whenever possible.

In addition, the automation testing approach is highly preferred as it can mimic virtual users more economically, making it a practical choice among testers. It also gives more repeatable and correct measurements of the performance metrics. This ensures the reliability of the testing process. Further, it also allows for the creation of repeatable and consistent test scenarios, enabling testers to run the same tests multiple times under controlled conditions.

It is important to note that complete performance testing automation is possible during component testing. However, the human intervention of performance engineers is still required to perform sophisticated tests on assembled applications. The future of performance testing lies in automating testing at all stages of the application lifecycle.

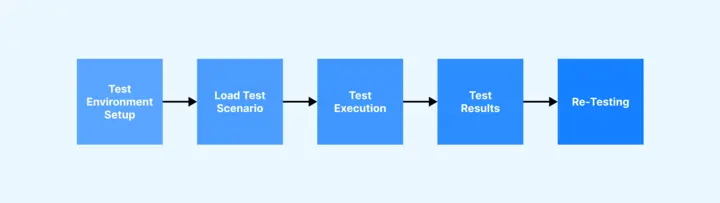

Steps to Perform Web Performance Testing

Now, let us learn the basic steps to execute web performance testing:

1. Identify Performance Scenario

Before initiating web performance testing, testers must understand the key scenarios affecting web application performance. For example, you can consider scenarios like peak usage time, high traffic periods, and others that might be critical to the software application.

2. Plan and Design Performance Test Script

In the next step, testers must spend time planning and designing performance test scripts. The test scripts must simulate the identified scenarios so that the performance of the software application can be accurately verified. They must contain the series of actions and interactions that users perform on the website. Tools like JMeter can be used to design test scripts and automate the test scenarios.

3. Configure the Test Environment and Distribute the Load

Then, you must begin the test by first identifying the test environment that correctly duplicates the intended production environment. To replicate your performance testing environment better, you must record the relevant specifications and configurations, including hardware and software. You must avoid conducting tests in live production environments unless safeguards are in place to avert disruptions to the user experience. The closer the resemblance between your test environment and the live production setup, the greater the precision of your test results.

Configuration management is imperative for programming the environment with pertinent variables prior to the execution of tests.

4. Define Acceptable Performance Levels

In this phase, define the performance objectives and the limits for each metric. Establish the objectives and numerical benchmarks that signify the success of the performance tests. A common approach is to reference the project specifications and the anticipated outcomes from the software. Testers can then identify test metrics, benchmarks, and thresholds to articulate acceptable system performance. These might include response time, throughput, and resource allocation criteria. Additionally, you can incorporate other metrics tailored to the specifics of your application.
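
One way to make such criteria explicit is to record them as data and check measured values against them. The sketch below is a minimal illustration; the metric names and limits are hypothetical, and real values come from the project specifications.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Threshold:
    metric: str
    limit: float
    kind: str  # "max": value must not exceed limit; "min": must not fall below


# Hypothetical targets chosen for illustration only.
THRESHOLDS = [
    Threshold("p95_response_s", 4.0, "max"),
    Threshold("throughput_rps", 200.0, "min"),
    Threshold("cpu_percent", 80.0, "max"),
]


def find_violations(measured, thresholds):
    """Return a description of every threshold the measurements break."""
    violations = []
    for threshold in thresholds:
        value = measured[threshold.metric]
        if threshold.kind == "max":
            broken = value > threshold.limit
        else:
            broken = value < threshold.limit
        if broken:
            violations.append(
                f"{threshold.metric}={value} violates "
                f"{threshold.kind} {threshold.limit}"
            )
    return violations
```

An empty list from `find_violations` means the run met every criterion, which makes the check easy to use as a pass/fail gate in an automated pipeline.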

5. Create Test Scenarios

Once the performance metrics have been identified, the next task is to formulate realistic test scenarios. These scenarios aim to replicate the practical usage of the software application, mirroring the actions that end users commonly perform while interacting with it. Test scenarios can cover diverse user interactions like registration, login, browsing, and purchasing, and should be designed to cover all facets of the software application's functionality. Essentially, they should accurately mirror authentic user behavior and the projected load on your software applications.
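
A realistic load rarely consists of every user doing the same thing, so scenarios are often combined into a weighted mix. The following sketch assigns one scenario to each virtual user; the scenario names follow the interactions mentioned above, while the weights are purely illustrative assumptions and would normally be derived from analytics data.

```python
import random

# Hypothetical traffic mix; the weights are illustrative, not measured.
SCENARIO_WEIGHTS = {
    "browse": 60,
    "login": 20,
    "purchase": 15,
    "register": 5,
}


def pick_scenarios(virtual_users, weights, seed=None):
    """Assign one scenario to each virtual user according to the weights."""
    rng = random.Random(seed)  # a fixed seed makes the plan repeatable
    names = list(weights)
    return rng.choices(
        names,
        weights=[weights[name] for name in names],
        k=virtual_users,
    )
```

Passing the same `seed` reproduces the same assignment, which helps keep repeated test runs comparable.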

6. Choose the Right Performance Testing Tools

Selecting the appropriate performance testing tools is paramount in guaranteeing precise and dependable test outcomes. Various performance testing tools, including Apache JMeter, LoadRunner, and Gatling, are accessible in the market. Given their distinct features and capabilities, opting for the tool that aligns most effectively with your specific requirements becomes crucial.

7. Execute the Web Performance Test

Once the test environment is established and the appropriate testing tool is selected, the commencement of performance tests becomes viable. To ensure consistency in results, it is imperative to conduct tests repeatedly. Vigilant monitoring of the software application throughout test execution is crucial for capturing any potential anomalies. For web applications, a convenient checklist can streamline the test execution process and save time.

You can also adopt parallel testing to run tests concurrently, saving time without compromising the accuracy of results. The following practices are recommended during the execution of performance tests:

- Designate an individual responsible for continuous monitoring.

- Establish a routine of regularly validating test data, systems, and scripts.

- Monitor all test results carefully to identify and rectify any process flaws.

- Maintain comprehensive logs of all tests, facilitating potential reiteration during the application life cycle.

- Initiate smoke tests as a preliminary step before delving into the actual tests.

- As a general practice, QA professionals typically execute each test three times.
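Repeating a test is only useful if the runs are then compared with each other. As a rough sketch, the coefficient of variation (standard deviation divided by the mean) across runs can signal whether results are stable; the 10% limit used here is an assumed value, not an established standard.

```python
import statistics


def is_consistent(run_means_ms: list[float], max_cv: float = 0.10) -> tuple[float, bool]:
    """Return the coefficient of variation across runs and whether it is within max_cv."""
    mean = statistics.mean(run_means_ms)
    cv = statistics.stdev(run_means_ms) / mean
    return cv, cv <= max_cv


# Hypothetical mean response times (ms) from three runs of the same test.
cv, ok = is_consistent([412.0, 398.0, 405.0])
print(f"coefficient of variation: {cv:.3f}, consistent: {ok}")
```

If the runs disagree by more than the chosen limit, it is worth checking the test data, scripts, and environment before trusting any single result.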

8. Result Analysis

Evaluate the performance test data accumulated throughout ongoing testing to pinpoint potential system performance issues or bottlenecks. A thorough analysis has the potential to offer actionable insights for enhancing the application.

9. Identify the Bottleneck

After analyzing the results, you can pinpoint the issue or bottleneck that is compromising the performance of the software application. Common bottlenecks include code problems, hardware issues, software glitches, and network issues. Once the bottleneck has been identified, it is crucial to fix the underlying issue.
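A simple way to narrow down a bottleneck is to break a slow request into the time spent in each component and rank the components by their share. The sketch below assumes such a breakdown is already available; the component names and timings are hypothetical.

```python
def rank_components(timings_ms: dict[str, float]) -> list[tuple[str, float]]:
    """Return (component, share of total time) pairs, largest share first."""
    total = sum(timings_ms.values())
    shares = [(name, ms / total) for name, ms in timings_ms.items()]
    return sorted(shares, key=lambda pair: pair[1], reverse=True)


# Hypothetical per-component breakdown of one slow request.
breakdown = {"network": 90.0, "web_server": 60.0, "application_code": 250.0, "database": 600.0}
ranking = rank_components(breakdown)

for component, share in ranking:
    print(f"{component}: {share:.0%}")
```

The component at the top of the ranking is the first candidate for investigation, although a large share does not by itself prove where the root cause lies.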

10. Debugging and Re-Testing

Upon receiving the test results and identifying bugs, share this information with the team. Compile the bugs and forward them to the relevant developers for resolution. If feasible, QA professionals can address some basic bugs to expedite the process and minimize back-and-forth within the team.

After addressing performance shortcomings, re-execute the test to confirm the cleanliness of the code and optimal performance of the software.

11. Continuous Monitoring

Maintain vigilance over your application's performance post-launch. Regular performance testing is an early detection mechanism for new issues, preventing their impact on users. Additionally, it ensures that your application adapts to evolving usage patterns and loads.
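Ongoing monitoring often boils down to comparing the latest measurements with a known baseline. Here is a minimal sketch of that comparison, assuming that lower values are better for every metric and that a 10% increase is the tolerance; both the metric names and the tolerance are illustrative.

```python
def find_regressions(
    baseline: dict[str, float], current: dict[str, float], tolerance: float = 0.10
) -> dict[str, float]:
    """Return metrics whose value grew by more than `tolerance` relative to the baseline.

    Assumes lower is better for every metric (for example, times in ms).
    """
    regressions = {}
    for metric, base in baseline.items():
        change = (current[metric] - base) / base
        if change > tolerance:
            regressions[metric] = change
    return regressions


# Hypothetical page timings before and after a release.
baseline = {"home_page_ms": 400.0, "search_ms": 500.0, "checkout_ms": 800.0}
latest = {"home_page_ms": 420.0, "search_ms": 650.0, "checkout_ms": 790.0}

for metric, change in find_regressions(baseline, latest).items():
    print(f"{metric} is {change:.0%} slower than baseline")
```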

Web Performance Testing in the Cloud

As the steps above show, replicating the production environment is essential for performance testing, and that requires a correctly configured test environment. However, it can be difficult to scale resources up or down on demand, organizations may face resource constraints, and building the environment may require upfront capital investment in hardware, servers, and networking equipment.

Opting for a cloud-based environment and testing in real user conditions is recommended. Cloud platforms provide scalable resources, allowing you to easily adjust the size and capacity of your test environment based on the testing requirements. It also offers rapid provisioning and deployment of resources.

Further, the easiest approach to executing tests in real user conditions involves running performance tests on actual browsers and devices. Instead of managing the numerous limitations of emulators and simulators, testers find greater efficacy in utilizing a real device cloud that provides real devices, browsers, and operating systems on demand for immediate testing.


QA professionals can ensure consistent and accurate results by executing tests on a real device cloud. Thorough and flawless testing is important in preventing significant bugs from slipping through undetected into production, optimizing the software to deliver the highest levels of user experience.

Whether you opt for manual or automated testing, real devices remain indispensable to the testing process.

Checklist for Web Performance Testing

To have a better and more robust execution of web performance testing without missing any crucial step, having a checklist is always preferable. Below is an expanded checklist covering various aspects of web performance testing:

- Check for performance goals of the software applications like high throughput.

- Check critical user scenarios and usage patterns.

- Determine the peak load and simultaneous user connections.

- Check the SLAs related to performance and ensure they align with user expectations.

- Validate performance against user expectations.

- Ensure that hardware infrastructure aligns with performance requirements.

- Ensure the software stack (web server, application server, database) is optimized.

- Check that code review is done to find performance bottlenecks.

- Regularly monitor and analyze performance metrics.

- Check whether security evaluation is done to ensure performance under secure conditions.

Using a checklist covering these aspects, you can systematically approach the web performance testing process and ensure your software application is robust, scalable, and meets user expectations.

Performance Testing Metrics

Various performance metrics, also known as key performance indicators (KPIs), play a crucial role in enabling organizations to verify their current performance.

Typical performance metrics include the following:

- Throughput: The quantity of data units a system processes within a specified time.

- Memory: The operational storage capacity accessible to a processor or workload.

- Response time, or latency: The duration between a user's inputted request and the commencement of the system's response.

- Bandwidth: The data volume per second that can traverse between workloads, typically across a network.

- Central Processing Unit (CPU) interrupts per second: The frequency of hardware interrupts a process receives each second.

- Average latency: Also known as wait time, it gauges the time to receive the initial byte after sending a request.

- Average load time: The average time taken to deliver each request.

- Peak response time: The maximum time frame required to fulfill a request.

- Session amounts: The maximum count of active sessions that can be concurrently open.

Leveraging these metrics and others empowers organizations to conduct various performance tests.
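Several of these metrics can be derived directly from raw request samples. The sketch below assumes each sample records a response time and a success flag, and it treats throughput as requests per second, which is one common interpretation; the sample values are invented for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Sample:
    response_ms: float
    ok: bool


def summarize(samples: list[Sample], test_duration_s: float) -> dict[str, float]:
    """Compute a few headline metrics from individual request samples."""
    times = [s.response_ms for s in samples]
    return {
        "throughput_rps": len(samples) / test_duration_s,
        "average_response_ms": sum(times) / len(times),
        "peak_response_ms": max(times),
        "error_rate_pct": 100 * sum(not s.ok for s in samples) / len(samples),
    }


# Hypothetical samples collected over a two-second window.
samples = [
    Sample(120.0, True),
    Sample(180.0, True),
    Sample(150.0, True),
    Sample(950.0, False),
    Sample(200.0, True),
]
summary = summarize(samples, test_duration_s=2.0)
print(summary)
```

Note how a single slow request dominates the peak response time while barely moving the other figures, which is why averages alone can hide problems.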

Web Performance Testing Reports

While we've extensively covered various aspects of web performance testing, one crucial aspect we haven't discussed is performance testing reports. These reports, along with dashboards and metrics, serve as the foundation for understanding the existence of bottlenecks and identifying necessary system enhancements for optimizing your websites, applications, and APIs.

Although the specifics of reports may differ among performance testing solutions, there are fundamental performance metrics (load time, response time, etc.) that demand inspection across all reports. Most tools (such as Apache JMeter and LoadRunner) also make it easy to share these reports, allowing feedback to be collected from different departments.

Effective planning of objectives and accurate execution of web performance tests simplify identifying issues in the performance reports. Conversely, inadequate planning may lead to confusion, necessitating a revisit to the initial stages to reevaluate performance tests. Unlike functional tests, where outcomes are clear as pass or fail, performance testing is more intricate, demanding additional analysis.

A significant visual created by performance testing tools like Google Chrome DevTools and WebPageTest is the waterfall chart, containing information and data for analysis. Load time is a critical performance metric, considering that slow page load times significantly increase the likelihood of visitors abandoning your pages. Web performance reports, aligned with your requirements plan, showcase load times, response times, and individual component load and response times, providing insights into compliance with specified thresholds.

In addition to the waterfall chart, which details load times, response times, and file sizes, users are typically presented with other metrics and dashboards revealing errors such as HTTP error codes or completion timeout errors due to prolonged element loading times. A complete investigation of these factors and metrics ensures adherence to specified performance thresholds, preventing negative impacts on web performance.

Further visuals and reports include the performance testing execution plan, illustrating the impact of virtual user numbers on response times over the test period. Comparing this data with the waterfall chart helps find specific components hampering performance. Another crucial graphic is the cumulative session count report, revealing instances where new sessions couldn't start, resulting in errors.
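The relationship between virtual user numbers and response times can also be inspected programmatically. As a sketch, the function below finds the lowest load level at which the average response time crosses a limit; the data points and the 1000 ms limit are assumptions for the example rather than values from any particular tool's report.

```python
from typing import Optional


def first_breach(load_curve: list[tuple[int, float]], limit_ms: float) -> Optional[int]:
    """Return the lowest virtual-user count whose response time exceeds limit_ms.

    Returns None when every measured load level stays within the limit.
    """
    for users, response_ms in sorted(load_curve):
        if response_ms > limit_ms:
            return users
    return None


# Hypothetical (virtual users, average response time in ms) pairs from a ramp-up test.
curve = [(50, 210.0), (100, 240.0), (200, 390.0), (400, 1150.0), (800, 3200.0)]
print(first_breach(curve, limit_ms=1000.0))
```

Knowing the load level where the limit is first breached tells you which part of the test period to compare against the waterfall chart.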

While comprehending these reports and metrics may require some time, it's critical for sites or applications subject to SLA requirements. Implementing any necessary performance improvements ensures readiness to handle the expected load. Addressing immediate concerns is the initial step, yet it's crucial to recognize that performance testing is an ongoing process, not a one-time endeavor. With each modification to an application or system, performance testing should be conducted again to uncover previously undetected issues.

Tools to Execute Web Performance Testing

To execute web performance testing effectively, you can use performance testing tools suited to your technology needs. There are many testing tools on the market; some are free, some are paid, and some are open-source. The main challenge is choosing the tool that best aligns with your software project requirements. Below is a list of some of the best web performance testing tools to choose from.

JMeter

Regarded as one of the most potent tools for executing load and stress tests on web applications, JMeter allows testers to emulate substantial traffic loads and understand the resilience of networks or servers. Supporting a diverse array of protocols such as Web, FTP, LDAP, TCP, Database (JDBC), and more, JMeter offers a comprehensive IDE that affords testers maximum control over the execution and monitoring of load tests.

Gatling

Gatling stands out as a load and performance testing framework, featuring an open-source nature complemented by an intuitive interface tailored for web applications. Gatling boasts compatibility with various protocols, including HTTP, Server-sent events, WebSockets, JMS, AMQP, MQTT, and ZeroMQ. With its HTML-rich reporting and integrated DSL, Gatling equips testers with various plugins, enabling them to enhance the software tool's functionality and optimize the testing framework.

LoadRunner

Widely recognized as a robust performance testing tool, LoadRunner supports a broad spectrum of protocols for assessing the performance of various websites and applications, including mobile platforms, which makes it highly adaptable. Although it is a complex tool that requires on-premises deployment, Micro Focus offers a web-based alternative named LoadRunner Cloud for short-term requirements.

LoadRunner easily identifies prevalent causes of performance issues while providing accurate application scalability and capacity predictions.

WebLOAD

Developed by RadView, WebLOAD is load-testing software designed for executing performance tests on websites, web applications, and APIs. The platform supports various protocols, cloud applications, and enterprise applications, and integrates with numerous third-party tools such as Git and Atlassian Bamboo. Functioning as a comprehensive tool for load testing, performance testing, and stress testing web applications, WebLOAD unifies performance, scalability, and integrity in a single process for the validation of web and mobile applications.

LoadNinja

LoadNinja, a SmartBear offering, changes the load testing process for websites, web apps, and APIs by leveraging real browsers to provide more accurate real-world results. This cloud-based tool enables teams to record and instantly play back comprehensive load tests effortlessly and use real browsers at scale without the complexities of dynamic correlation. LoadNinja includes a user-friendly point-and-click scripting tool called the InstaPlay recorder.

BlazeMeter

Built on JMeter, BlazeMeter is a load-testing solution that supports open-source, Java-based software for functional and performance tests on web applications. The platform integrates with various third-party testing tools like Apache JMeter, Selenium, and Grinder, enabling teams to upload scripts from these tools into BlazeMeter. Positioned by its vendor as a complete, continuous testing platform, BlazeMeter offers testers advanced features such as mock services, synthetic test data, and comprehensive API testing and monitoring, which are scalable to many users.

NeoLoad

Developed by Neotys, NeoLoad is an on-premises performance testing tool designed for websites, applications, and APIs. While on-premises solutions require additional hardware capacity, specific system requirements, and ongoing maintenance, NeoLoad supports many popular protocols, frameworks, web services, and applications.

Grafana k6

k6 by Grafana is an open-source load testing tool designed for performance testing, scalability testing, and stress testing of web applications. Developed with a developer-centric approach, k6 is known for its simplicity, flexibility, and the ability to execute tests with realistic scenarios. You can further scale the end-to-end testing of web applications by integrating Grafana k6 with cloud testing platforms like LambdaTest and get the flexibility to run your test on different OS and browser (Chromium) versions.

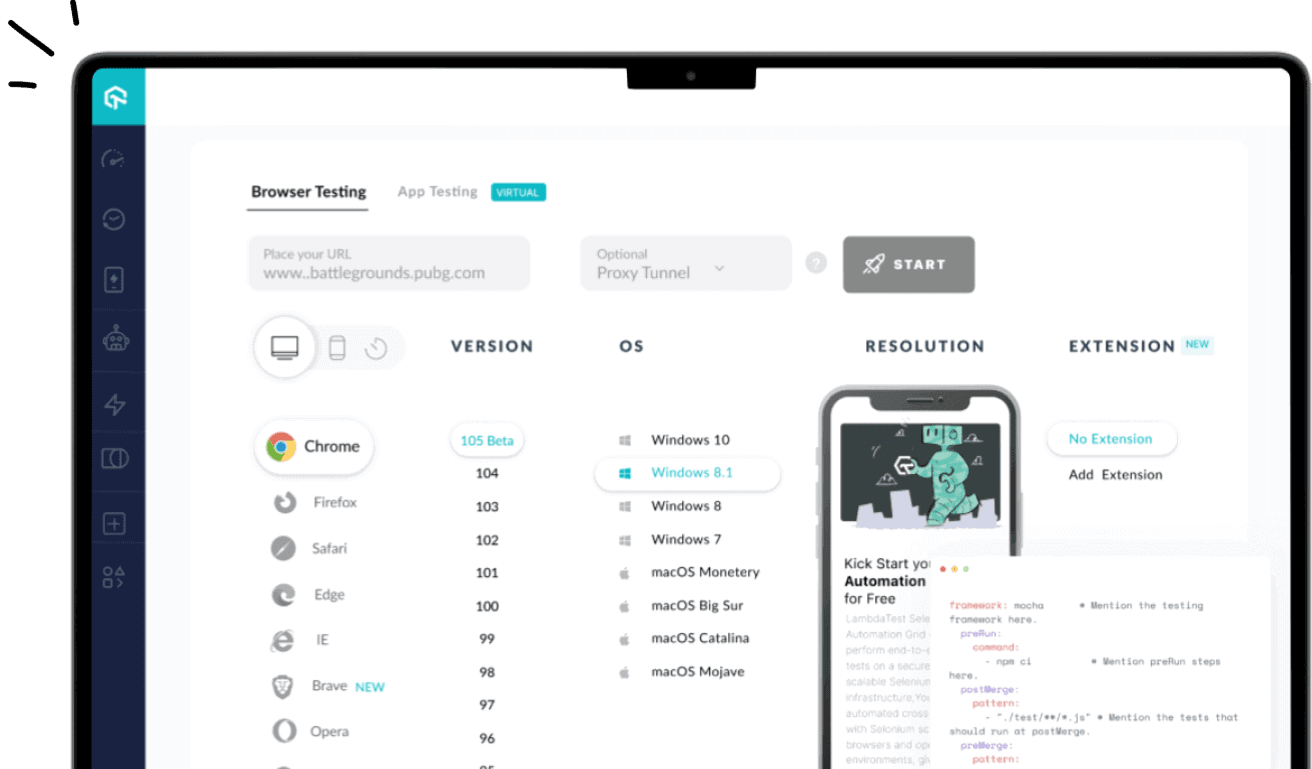

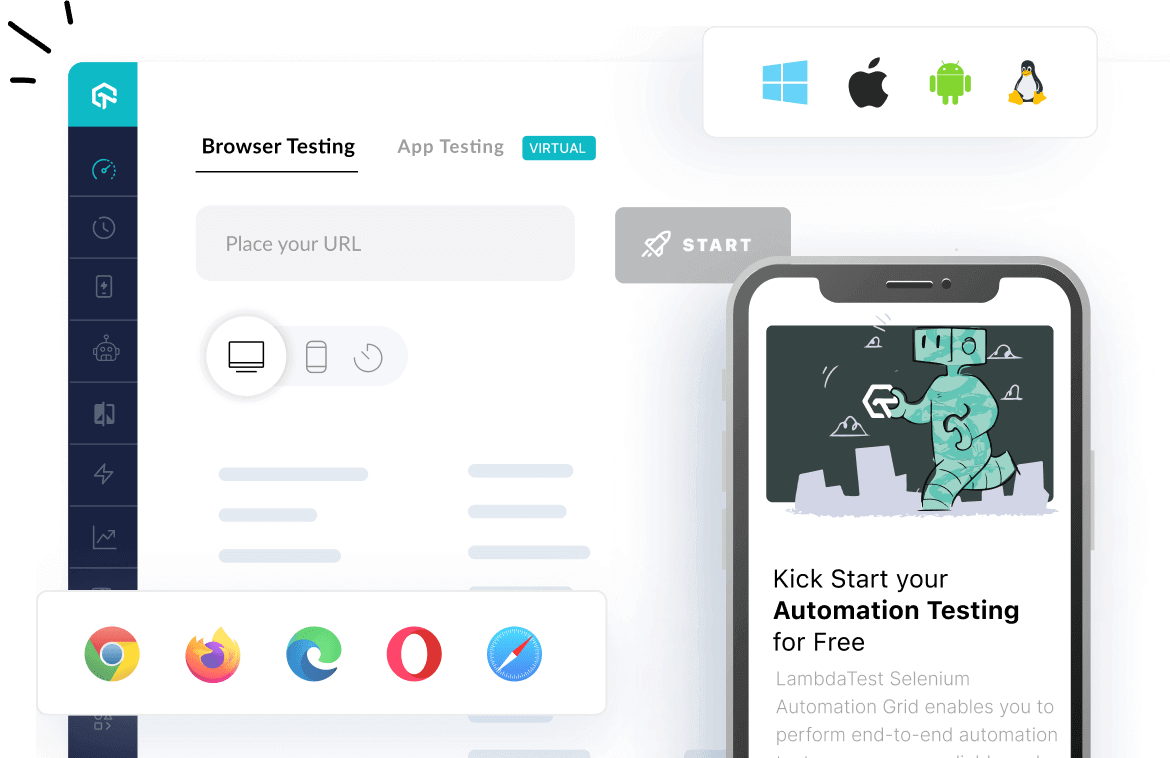

LambdaTest is an AI-powered test orchestration and execution platform to run manual and automated tests at scale. The platform allows you to perform real-time and automation testing across 3000+ environments and real mobile devices.

Visit our support documentation to get started with k6 testing with LambdaTest.


Challenges of Web Performance Testing

Web performance testing comes with challenges that every tester should know so that they can be addressed during the test process. Here are some of the most common ones:

- Selection of Inappropriate Performance Testing Tools

Choosing the right performance testing tool is a widespread challenge, and it often results in the selection of less-than-ideal options. The decision on which tool to use depends on factors like the application's communication protocol, technology stack, the proficiency of the performance tester, and the associated licensing costs.

Opting for an ill-suited tool may cost testing days as effort is invested in getting the test scripts to function properly. It is imperative that the chosen performance tool accurately recognizes the controls of the application under test.

Solution:

To ensure the success of the testing process, the QA manager and the QA team must evaluate the Application Under Test (AUT), consider licensing costs, and then make an informed choice of the best performance testing tool.

- Lack of Comprehensive Test Strategy and Coverage

Developing a thorough testing strategy that identifies and prioritizes software project risks and outlines mitigation actions requires significant effort. This process includes recognizing software application performance characteristics, planning tests to exercise these characteristics, simulating real user interactions, and testing API services to ensure they function as part of the overall test strategy. A lack of robust brainstorming during the creation of the test strategy and coverage hampers the effectiveness of performance test results.

Solution:

The performance team should invest substantial effort in analyzing and understanding application architecture and other performance characteristics, such as load distribution, usage models, geographical usage, availability requirements, resilience, reliability, and technology stack. A clear testing strategy must be developed to validate these performance characteristics and yield effective performance test results.

- Lack of Awareness about the Importance of Performance Tests

Many stakeholders and budget decision-makers fail to grasp the value of performance testing during software development. Often, performance issues surface only after the production release, potentially causing the website, app, or software to crash.

Solution:

It is imperative for stakeholders, product owners, or test architects to include performance testing as an integral part of the end-to-end testing strategy. Applications should undergo performance testing that exercises web servers, databases, and third-party apps to ensure effective performance.

Best Practices of Web Performance Testing

Here are some best practices for web performance testing that will help you get the most out of it and ensure the quality of your software applications.

- Commence at the Unit Test Level:

You should avoid delaying the execution of web performance tests until the code advances to the integration stage. Instead, incorporate the practice of running tests on code units before merging them into the main branch.

- Identify Peak Traffic Times:

While performing the test, you must evaluate your software application's usage data to pinpoint instances of peak traffic. These high-traffic periods should be integrated into your performance testing scenarios.

- Strive for Enhanced Test Coverage:

Depending on a single web performance testing scenario is insufficient. Comprehensive performance tests are essential to catch issues that may emerge outside carefully planned scenarios. Integrating an AI-enabled, codeless web performance testing tool can expand the testing footprint and provide broader scenario and test coverage.

- Create Realistic Tests:

When executing a web performance test, avoid simply flooding the server cluster with thousands of users and labeling it a performance test. While this may stress-test the software, it accomplishes little else. Real-world traffic originates from a diverse array of devices (both mobile and desktop), browsers, and operating systems, and testing must reflect this diversity. A platform like LambdaTest facilitates this process. Invest time in research to identify the likely device-browser-OS combinations of the target audience and conduct tests on those devices using LambdaTest's real device cloud.

- Shift Left with Performance Tests:

Web performance testing should not be left to the final stages of the development process. Shifting performance tests left helps identify defects and implement solutions early, which saves both time and cost.

- Select the Appropriate Test Automation Platform:

Ensure that the test automation platform mimics real user interactions with the software. This is crucial for achieving favorable outcomes in performance tests, particularly when there are alterations to performance test parameters.
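The practice of identifying peak traffic times can be approximated from request timestamps in your usage data. The following is a minimal sketch, assuming ISO 8601 timestamps taken from access logs; the timestamps shown are made up for the example.

```python
from collections import Counter
from datetime import datetime


def peak_hour(timestamps: list[str]) -> tuple[int, int]:
    """Return (hour of day, request count) for the busiest hour."""
    hours = Counter(datetime.fromisoformat(ts).hour for ts in timestamps)
    return hours.most_common(1)[0]


# Hypothetical request timestamps extracted from access logs.
requests_seen = [
    "2023-12-14T09:15:00",
    "2023-12-14T13:02:11",
    "2023-12-14T13:40:00",
    "2023-12-14T13:59:59",
    "2023-12-14T20:30:00",
    "2023-12-14T20:45:00",
]
hour, count = peak_hour(requests_seen)
print(f"Busiest hour: {hour}:00 with {count} requests")
```

The request volume observed in the busiest hour is a reasonable starting point for the load level used in a peak-traffic scenario.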

Conclusion

In this tutorial, we have thoroughly discussed web performance testing, its concept, significance, and how to execute it. Let us summarize the key takeaways. Web performance testing is crucial in ensuring the quality and functionality of websites and web applications by determining their speed, scalability, and stability. It is an important part of the Software Testing Life Cycle because it allows bottlenecks to be identified and improves software responsiveness. Through this testing, organizations can ensure efficient, responsive, and reliable web platforms.

In web performance testing, the QA team performs load testing, stress testing, and other tests that simulate real-world scenarios, evaluates the application's performance under different conditions, and fixes any performance-related issues. The importance of this test process cannot be overstated; it is indispensable in any software development scenario. This guide should give you the information you need to get started with web performance testing.


Author's Profile

Nazneen Ahmad

Nazneen Ahmad is an experienced technical writer with over five years of experience in the software development and testing field. As a freelancer, she has worked on various projects to create technical documentation, user manuals, training materials, and other SEO-optimized content in various domains, including IT, healthcare, finance, and education. You can also follow her on Twitter.

Reviewer's Profile

Shahzeb Hoda

Shahzeb currently holds the position of Senior Product Marketing Manager at LambdaTest and brings a wealth of experience spanning over a decade in Quality Engineering, Security, and E-Learning domains. Over the course of his 3-year tenure at LambdaTest, he actively contributes to the review process of blogs, learning hubs, and product updates. With a Master's degree (M.Tech) in Computer Science and a seasoned expert in the technology domain, he possesses extensive knowledge spanning diverse areas of web development and software testing, including automation testing, DevOps, continuous testing, and beyond.
