How To Do Parameterization In Pytest With Selenium?

Himanshu Sheth

Posted On: March 20, 2021


This article is a part of our Content Hub. For more in-depth resources, check out our content hub on Selenium Python Tutorial and Selenium pytest Tutorial.

Automated testing accelerates the testing process so that issues can be identified & fixed faster. At the initial stages of product development, a small set of inputs is enough for unit testing and functional testing. However, the complexity of tests increases as development progresses, and a large set of input values is required to verify all of the code’s functionality. Parameterized tests help most in such scenarios. In this Selenium Python tutorial, we will deep dive into parameterization in pytest, a testing framework for Python.


Parameterized Tests

Parameterized tests are an excellent way to define and execute multiple test cases/test suites where the only difference is the data being used for testing the code. They not only help avoid code duplication but also help improve test coverage. Parameterization in pytest can also stand in for classic setup & teardown operations, which helps with tasks such as testing across different browsers or testing against a database.

Test parameterization in pytest is possible at several levels:

- pytest.fixture() to parametrize fixture functions.

- @pytest.mark.parametrize to define multiple sets of arguments and fixtures at the level of test functions or class.

- pytest_generate_tests() to define custom parameterization schemes or extensions.

In this pytest tutorial, learn how to use parameterization in pytest to write concise and maintainable test cases by running the same test code with multiple data sets.

Pytest – Fixtures (Usage and Implementation)

Consider a scenario where employee records that are stored in a database have to be extracted by executing a series of MySQL queries. If the employee database is small, record extraction will not take much time, but the situation will be different if the organization has a large number of employees.

Frequent access & repetitive implementation involving the database should be avoided, as these are CPU-intensive operations. This is where fixtures in pytest are instrumental in reducing the overhead involved in testing across large data sets.

The pytest framework has a much better way of handling fixtures when compared to the XUnit style of fixtures. Fixtures are a set of resources that are initialized/setup before the test starts and are cleaned upon completion of the tests. There are several advantages of using fixtures in pytest; some of the key benefits are below:

- Fixtures are implemented in a modular manner which makes it easy to implement them. They are easy to use and there is no learning curve involved.

- Fixtures can have lifetime and scope similar to variables in any programming language. Fixtures in pytest can have function, class, module, package, or session scope.

- Fixtures that have function scope improve the readability of the test code. These fixtures reduce the effort involved in the maintainability of the test code.

- Fixture functions are reusable and can be used for simple unit testing or testing complex scenarios.

- Every fixture that is used in the test code has a name/identifier associated with it. Depending on the fixture’s scope, one fixture can call another fixture just like standard function calls.

Fixture functions use the Object-Oriented programming concept termed Dependency Injection, which allows the applications to inject objects on the fly to classes requiring them, without the classes being responsible for those objects. In the case of pytest, fixture functions are Injectors, and test functions are Consumers of those fixture functions.

The default fixture scope is function. The scope can be changed to class, module, package, or session based on the test requirements. The scope determines how often the fixture is created: once per test function, once per class, once per module, and so on. Higher-scoped fixtures (e.g., session) are instantiated before lower-scoped fixtures (e.g., module, class, and function).

Fixture Functions – Parameterizing Fixtures

Fixture functions can also be parameterized where those functions would be called multiple times, and each time, the dependent tests are executed. Parameters in fixtures are different from function arguments. Parameters can be list, tuples, sets, generators, etc.

Parameters are iterables and when pytest comes across an iterable, it converts the iterable into a list. The test functions need not be aware of the fixture parameterization. Using fixture parameterization, you can test your code’s functionality by writing exhaustive test cases with minimal code duplication.

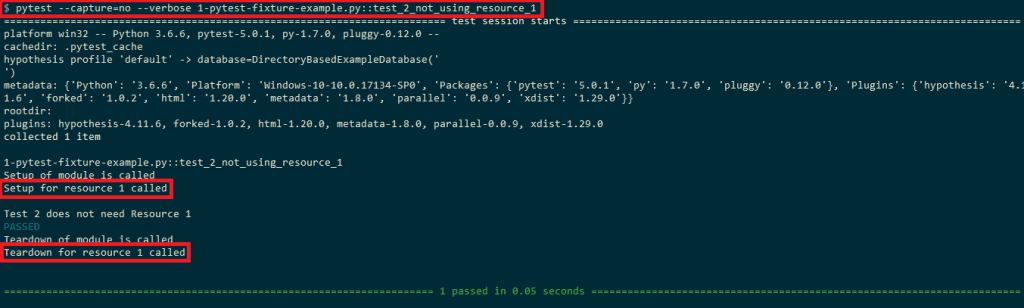

To demonstrate fixtures’ usage, we look at a simple example where the setup() and teardown() methods for resource 1 are called even when test_2 is executed. In case such a scenario involves database operations (read/write), it would cause significant overhead on the system on which the tests are executed.

```python
# Import all the necessary modules
import pytest

def resource_1_setup():
    print('Setup for resource-1 called')

def resource_1_teardown():
    print('Teardown for resource-1 called')

def setup_module(module):
    print('\nSetup of module is called')
    resource_1_setup()

def teardown_module(module):
    print('\nTeardown of module is called')
    resource_1_teardown()

def test_1_using_resource_1():
    print('Test 1 that uses resource-1')

def test_2_not_using_resource_1():
    print('\nTest 2 does not need resource 1')
```

The test function test_2_not_using_resource_1() is called using the following command

```bash
pytest --capture=no --verbose <file-name.py>::test_2_not_using_resource_1
```

As seen in the output below, the fixture functions for test_1 are called even when test_2 is executed.

This can be solved by replacing the xUnit-style setup_module()/teardown_module() pair with a pytest fixture: resource_1_setup() becomes a module-scoped fixture function, and resource_1_teardown() is registered as its finalizer.

```python
# Import all the necessary modules
import pytest

# Implement the fixture that has module scope
@pytest.fixture(scope='module')
def resource_1_setup(request):
    print('\nSetup for resource 1 called')

    def resource_1_teardown():
        print('\nTeardown for resource 1 called')

    # An alternative option for executing teardown code is to make use of the addfinalizer method of the request-context
    # object to register finalization functions.
    # Source - https://docs.pytest.org/en/latest/fixture.html
    request.addfinalizer(resource_1_teardown)

def test_1_using_resource_1(resource_1_setup):
    print('Test 1 uses resource 1')

def test_2_not_using_resource_1():
    print('\nTest 2 does not need Resource 1')
```

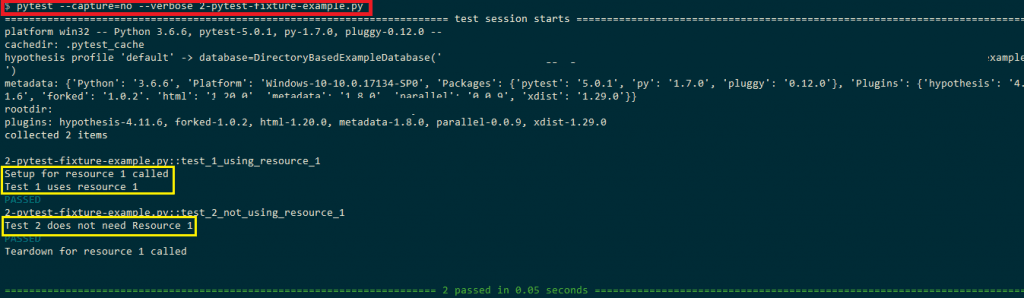

As seen in the output below, the fixture functions for test_1 are not called when test_2 is executed.

In this Selenium Python tutorial, we have looked into the scope and lifetime of fixture functions. Now, let us look at the usage of parameterized fixtures. When performing automated cross browser testing, some implementation might be common for browsers on which the test needs to be performed.

In such cases, parameterized fixtures with an appropriate scope can be used to avoid code duplication and make the code more portable & maintainable.

In the example below, Chrome, Firefox, and Microsoft Edge are used as parameters to the fixture function with the scope set to class, i.e. @pytest.fixture(params=["chrome", "firefox", "MicrosoftEdge"], scope="class")

```python
# Import the 'modules' that are required for execution
import pytest
import pytest_html
from selenium import webdriver
from selenium.webdriver.chrome.options import Options
from selenium.webdriver.common.keys import Keys
from time import sleep

@pytest.fixture(params=["chrome", "firefox", "MicrosoftEdge"],scope="class")
def driver_init(request):
    if request.param == "chrome":
        web_driver = webdriver.Chrome()
    if request.param == "firefox":
        web_driver = webdriver.Firefox()
    if request.param == "MicrosoftEdge":
        web_driver = webdriver.Edge(executable_path=r'C:\EdgeDriver\MicrosoftWebDriver.exe')
    request.cls.driver = web_driver
    yield
    web_driver.close()

@pytest.mark.usefixtures("driver_init")
class BasicTest:
    pass

class Test_URL(BasicTest):
    def test_open_url(self):
        self.driver.get("https://www.lambdatest.com/")
        print(self.driver.title)
        sleep(5)
```

The fixture function gets access to each parameter through the request object.

```python
def driver_init(request):
```

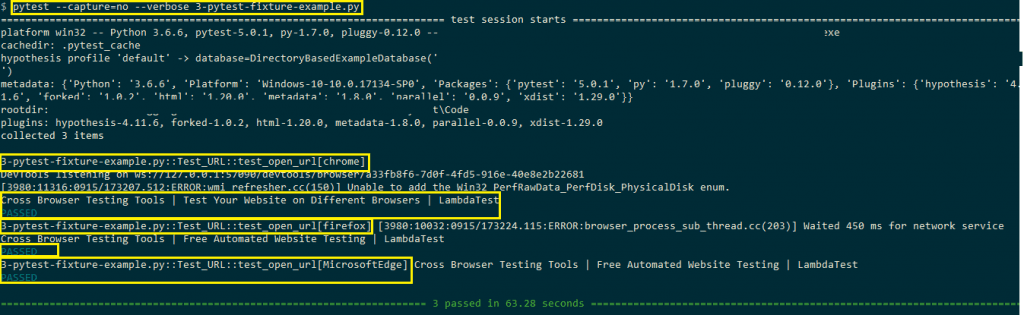

Three parameters with scope as a class are used for cross browser testing. The parameters are browsers on which testing has to be performed, i.e., Chrome, Firefox, and Microsoft Edge.

```python
@pytest.fixture(params=["chrome", "firefox", "MicrosoftEdge"],scope="class")
```

Depending on the input parameter, the corresponding webdriver is invoked, i.e., If the input parameter is chrome, the Chrome webdriver is set.

```python
if request.param == "chrome":
    web_driver = webdriver.Chrome()
```

Once the execution/testing is complete, the allocated resources on initialization have to be freed. The code after yield statement serves as the teardown code where the resources allocated for the webdriver are freed.

```python
yield
web_driver.close()
```
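The setup/teardown split around yield can be tried out without a real browser. The following is a minimal sketch rather than the article's Selenium code: FakeDriver and managed_driver are hypothetical names introduced only for illustration, and the fixture simply delegates to the generator so that the same logic can be exercised outside pytest.

```python
import pytest


class FakeDriver:
    """Hypothetical stand-in for a Selenium WebDriver (illustration only)."""

    def __init__(self, name):
        self.name = name
        self.closed = False

    def quit(self):
        self.closed = True


def managed_driver(name):
    """Create a driver, hand it to the caller, and always release it afterwards."""
    driver = FakeDriver(name)
    try:
        yield driver   # everything before this line is setup
    finally:
        driver.quit()  # everything after it is teardown


@pytest.fixture(params=["chrome", "firefox"])
def driver(request):
    # request.param holds the current entry of params
    yield from managed_driver(request.param)


def test_driver_is_open(driver):
    assert driver.name in ("chrome", "firefox")
    assert not driver.closed
```

Wrapping the teardown in try/finally is a design choice of this sketch: it releases the resource even when the consumer of the generator stops early.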

Shown below is the output snapshot where the URL under test, i.e., LambdaTest homepage, is successfully opened on three browsers, i.e., Chrome, Firefox, and Microsoft Edge.

Sharing fixture functions using conftest.py

In case you have to share the fixture functions between different files, you can make use of conftest.py. The fixture functions that have to be shared should be moved to conftest.py. Import of the particular file/function is not required since it automatically gets discovered by pytest when it is defined in conftest.py.

Fixture functions are checked for presence in a hierarchical order, which is test classes, then test modules, then conftest.py files, and lastly, built-in & third-party plugins.

Sharing of test data

If there is a requirement to share data between different tests, you should load the data to be shared in fixtures. The data extraction using this approach can be faster since it uses automatic caching mechanisms of pytest.

There is also an option to add the data in the tests folder in the directory structure where test cases/test suites are located. Community plugins like pytest-datadir and pytest-datafiles can also be used.

@pytest.mark.parametrize: Parametrize test functions

Before we deep dive into the @pytest.mark.parametrize decorator, we have a look at an example where add/concatenation operation is done on different types of input values, e.g., integer, float, string, etc. Below is the implementation in pytest

```python
# Import the 'modules' that are required for execution
import pytest
import pytest_html

# A simple addition function that adds/concatenates two input values
def add_operation(input_1, input_2):
    return input_1 + input_2

# Simple test functions to verify the add_operation function
def test_add_integers():
    result = add_operation(9, 6)
    assert result == 15

def test_add_strings():
    result = add_operation('Cross browser testing on ', 'LambdaTest')
    assert result == 'Cross browser testing on LambdaTest'
```

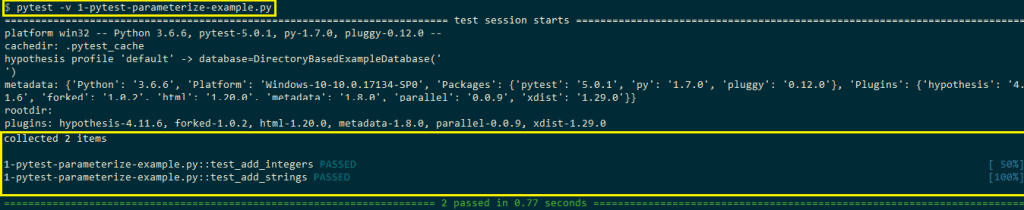

In the code, the function add_operation() is tested for integer and string input values. An AssertionError is raised in case the test case fails. Below is the snapshot of the test execution

There is a lot of duplication of code, and the only thing that has changed in two test functions, i.e., test_add_integers() and test_add_strings(), is the type of input arguments. This can be avoided by making use of the @pytest.mark.parametrize decorator that enables parameterization of test functions. Below is the syntax of the pytest.mark.parametrize decorator

```python
Metafunc.parametrize(argnames, argvalues, indirect=False, ids=None, scope=None)
```

MetaFunc is an object used to inspect a test function and generate tests according to test configuration or values specified in the class or module where a test function is defined. @pytest.mark.parametrize can take different parameters as mentioned below:

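- argnames: A comma-separated string denoting one or more argument names, or a list/tuple of argument name strings.

- argvalues: Denotes the number of times the test is invoked with different argument values. If there is a single argname, argvalues is a list of values; if there are N argnames, it is a list of N-tuples in which each tuple element specifies the value for its corresponding argname.

- indirect: A boolean or a list of argnames. When set, the value is passed to the fixture of the same name (via request.param) instead of directly to the test function.

- ids: A list of string ids (or a callable that returns them) used to label each generated test.

- scope: If specified, denotes the scope of the parameters.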

We port the example code which was shown above using @pytest.mark.parametrize decorator.

```python
# Import the 'modules' that are required for execution
import pytest
import pytest_html

# A simple addition function that adds/concatenates two input values
def add_operation(input_1, input_2):
    return input_1 + input_2

# We make use of the parametrize decorator to supply input arguments
# to the test function
@pytest.mark.parametrize('inp_1, inp_2,result',
    [
        (9, 6, 15),
        ('Cross browser testing on ', 'LambdaTest', 'Cross browser testing on LambdaTest')
    ]
)
def test_add_integers_strings(inp_1, inp_2, result):
    result_1 = add_operation(inp_1, inp_2)
    assert result_1 == result
```

The argument names in @pytest.mark.parametrize are passed as a single string in which the names are separated by commas (,).

```python
@pytest.mark.parametrize('inp_1, inp_2,result',
```

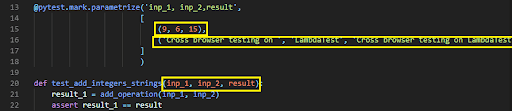

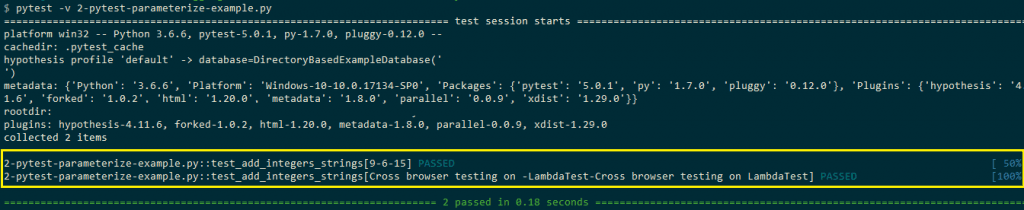

The argument names used in parametrize are used in the test function where testing is performed. The input values in parametrize decorator are passed iteratively to the test function. For example, on the first run, the input values (9, 6, 15) are assigned to input arguments of test_add_integers_strings test function i.e. inp_1 = 9, inp_2 = 6, result = 15.

As shown in the execution snapshot, the set of input values (in the form of tuples) are iterated after every test run until there are no input values in the input list.
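When the input list grows, the auto-generated test ids become hard to read. As a small sketch (the extra float case and the id strings are illustrative assumptions, not part of the original example), the ids argument of parametrize can label each tuple:

```python
import pytest


def add_operation(input_1, input_2):
    return input_1 + input_2


@pytest.mark.parametrize(
    "inp_1, inp_2, result",
    [
        (9, 6, 15),
        (1.5, 2.5, 4.0),
        ("Cross browser testing on ", "LambdaTest", "Cross browser testing on LambdaTest"),
    ],
    # One id per tuple, in the same order as the tuples above
    ids=["integers", "floats", "strings"],
)
def test_add_operation(inp_1, inp_2, result):
    assert add_operation(inp_1, inp_2) == result
```

Running pytest with --verbose should then report the cases as test_add_operation[integers], test_add_operation[floats], and test_add_operation[strings].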

When you are doing automated cross browser testing for your website/web application, you would need to test the functionality on various combinations of web browsers, operating systems, and devices. @pytest.mark.parametrize decorator can be used for enabling parameterization of test cases that are used for cross browser testing.

Shown below is the test code that uses @pytest.mark.parametrize decorator for passing input arguments (web-browser, URL) to the test function i.e. test_url_on_browsers(input_browser, input_url)

```python
# Import the 'modules' that are required for execution
import pytest
import pytest_html
from selenium import webdriver
from selenium.webdriver.chrome.options import Options
from selenium.webdriver.common.keys import Keys
from time import sleep

global url_under_test

# We make use of the parametrize decorator to supply input arguments
# to the test function
@pytest.mark.parametrize('input_browser, input_url',
    [
        ('chrome', 'http://www.lambdatest.com'),
        ('firefox', 'http://www.duckduckgo.com'),
        ('MicrosoftEdge', 'http://www.google.com'),
    ]
)
def test_url_on_browsers(input_browser, input_url):
    if input_browser == "chrome":
        web_driver = webdriver.Chrome()
    if input_browser == "firefox":
        web_driver = webdriver.Firefox()
    if input_browser == "MicrosoftEdge":
        # The location where Microsoft Edge Driver (MicrosoftWebDriver.exe) is placed.
        web_driver = webdriver.Edge(executable_path=r'C:\EdgeDriver\MicrosoftWebDriver.exe')

    web_driver.maximize_window()
    web_driver.get(input_url)
    print(web_driver.title)
    sleep(5)
    web_driver.close()
```

Depending on the input_browser, the corresponding webdriver is set i.e. Chrome webdriver is set if the input browser is Chrome.

```python
def test_url_on_browsers(input_browser, input_url):
    if input_browser == "chrome":
        web_driver = webdriver.Chrome()
    if input_browser == "firefox":
        web_driver = webdriver.Firefox()
    if input_browser == "MicrosoftEdge":
        # The location where Microsoft Edge Driver (MicrosoftWebDriver.exe) is placed.
        web_driver = webdriver.Edge(executable_path=r'C:\EdgeDriver\MicrosoftWebDriver.exe')
```

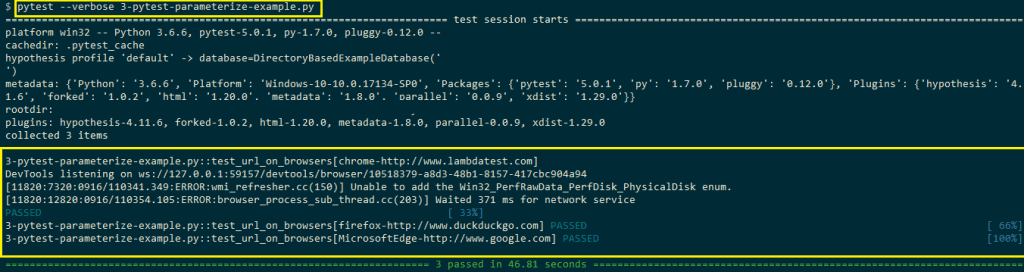

As seen in the output snapshot, the @pytest.mark.parametrize decorator defines three different (input_browser, input_url) tuples so that test_url_on_browsers() runs three times, using them in turn.

Pytest APIs that can be used with Parameterization

During cross browser testing or Selenium automation testing, there would be cases where you would want to skip some test cases or want to test the functionalities by passing the wrong parameters. In failed scenarios, the result of the test is FAIL, which is the expected result, and you want the execution to proceed even after the failure of the test case execution.

This is where the APIs of pytest are used along with its decorators. Some of them are mentioned below:

1. pytest.fail – Explicitly fail an executing test case with a message that tells the user why the test failed.

Syntax

```python
fail(msg: str = '', pytrace: bool = True)
```

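Parameters: msg is the failure message shown to the user (named reason in newer pytest releases); pytrace, when set to False, suppresses the Python traceback so that only msg is reported.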

Example

```python
import pytest

# A simple addition function that adds/concatenates two input values
def add_operation(input_1, input_2):
    return input_1 + input_2

@pytest.mark.parametrize('inp_1, inp_2,result',
    [
        (9, 6, 15),
        (10, 6, 22)
    ]
)
def test_add_integers_strings(inp_1, inp_2, result):
    result_1 = add_operation(inp_1, inp_2)
    if (result_1 != result):
        # Enable the stack trace for debugging the issue
        pytest.fail("Test failed", True)
```

2. pytest.xfail – Intentionally mark a test case as xfailed with a valid reason. This option is handy when you have the test cases ready to test functionality that is not fully complete. Once the implementation of the functionality is complete, the test case can be removed from the xfail status.

Syntax

```python
xfail(reason: str = '')
```

Example

```python
import pytest

# A simple addition function that adds/concatenates two input values
def add_operation(input_1, input_2):
    return input_1 + input_2

# We make use of the parametrize decorator to supply input arguments
# to the test function
@pytest.mark.parametrize('inp_1, inp_2,result',
    [
        (9, 6, 15),
        pytest.param(10, 6, 22, marks=pytest.mark.xfail)
    ]
)
def test_add_integers_strings(inp_1, inp_2, result):
    result_1 = add_operation(inp_1, inp_2)
    assert result_1 == result
```

3. pytest.skip – Skip an executing test with a message indicating the reason for the skip. This API can only be used during the setup, call, or teardown phase of testing, or during collection when the allow_module_level flag is set.

Syntax

```python
skip(msg[, allow_module_level=False])
```

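Parameters: msg is the reason for the skip (named reason in newer pytest releases); allow_module_level allows the function to be called at module level, in which case the rest of the module is skipped.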

Example

```python
import pytest

# A simple addition function that adds/concatenates two input values
def add_operation(input_1, input_2):
    return input_1 + input_2

# We make use of the parametrize decorator to supply input arguments
# to the test function
@pytest.mark.parametrize('inp_1, inp_2,result',
    [
        (9, 6, 15),
        pytest.param(10, 6, 22, marks=pytest.mark.skip)
    ]
)
def test_add_integers_strings(inp_1, inp_2, result):
    result_1 = add_operation(inp_1, inp_2)
    assert result_1 == result
```

4. pytest.main – In-process test run is performed, and exit code is returned to the caller. This is the ideal way through which you can invoke pytest programmatically from the code.

Syntax

```python
main(args=None, plugins=None)
```

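Parameters: args is a list of command-line arguments; plugins is a list of plugin objects to be auto-registered during initialization.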

Example

Shown below is a code snippet which shows some of the ways in which pytest.main can be used

```python
import pytest

### Some pytest implementation before pytest.main is invoked ###

# Invoking another pytest file (arguments are passed as a list of strings)
pytest.main(["test_multiplication.py"])

# Passing command-line options to pytest.main:
pytest.main(["-x", "some-dir"])
```

5. pytest.param – This API is used to specify parameters in pytest.mark.parametrize calls or parameterized fixtures.

Syntax

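```python
pytest.param(*values, marks=(), id=None)
```

Parameters: values are the values of the parameter set, in order; marks is a single mark or a list of marks to be applied to this parameter set; id is the string id to attribute to this parameter set.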

Example

Already covered as a part of examples that use pytest.xfail and pytest.skip.
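For completeness, here is a short sketch that combines the id and marks arguments of pytest.param. The id strings and the xfail reason are illustrative assumptions; the add_operation() function is the same one used in the earlier examples.

```python
import pytest


def add_operation(input_1, input_2):
    return input_1 + input_2


CASES = [
    pytest.param(9, 6, 15, id="valid-sum"),
    pytest.param(
        10, 6, 22,
        # The expected value is wrong on purpose, so the case is marked xfail
        marks=pytest.mark.xfail(reason="expected value is deliberately wrong"),
        id="wrong-expectation",
    ),
]


@pytest.mark.parametrize("inp_1, inp_2, result", CASES)
def test_add_operation(inp_1, inp_2, result):
    assert add_operation(inp_1, inp_2) == result
```

Keeping the cases in a module-level list is optional; it merely makes the parameter sets reusable across several test functions.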

6. pytest.exit – As the name specifies, this API is used to exit the testing process.

Syntax

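```python
exit(msg: str, returncode: Optional[int] = None)
```

Parameters: msg is the message to display upon exit (named reason in newer pytest releases); returncode is the return code to be used when exiting pytest.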

Example

```python
import pytest

# A simple addition function that adds/concatenates two input values
def add_operation(input_1, input_2):
    return input_1 + input_2

# We make use of the parametrize decorator to supply input arguments
# to the test function
@pytest.mark.parametrize('inp_1, inp_2,result',
    [
        (10, 6, 22),
        (9, 6, 15)
    ]
)
def test_add_integers_strings(inp_1, inp_2, result):
    result_1 = add_operation(inp_1, inp_2)
    if (result_1 != result):
        # The first test fails hence the second test will not be executed at all
        pytest.exit("Test has failed", -1)
```

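7. pytest.raises – Assert that a block of code raises the expected exception; the test fails if the exception is not raised.

Syntax

```python
with raises(expected_exception: Exception[, match]) as excinfo
```

Parameters: expected_exception is the exception type (or tuple of types) that the code is expected to raise; match is an optional string or regular expression that is searched for in the string representation of the exception.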

Example

```python
import pytest

def zero_div_test():
    return 20/0

def test_invocation_1():
    with pytest.raises(ZeroDivisionError):
        zero_div_test()

@pytest.mark.xfail(raises=ZeroDivisionError)
def test_invocation_2():
    zero_div_test()
```
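The match argument and the excinfo object are easier to follow with a concrete case. The sketch below is an assumption-laden illustration: parse_port() is a hypothetical helper invented for this example and is not part of pytest or of the article's code.

```python
import pytest


def parse_port(value):
    """Hypothetical helper: convert a string to a valid TCP port number."""
    port = int(value)
    if not 0 < port < 65536:
        raise ValueError(f"port out of range: {port}")
    return port


def test_parse_port_rejects_out_of_range():
    # match is searched as a regular expression in the exception message
    with pytest.raises(ValueError, match=r"out of range") as excinfo:
        parse_port("70000")
    # excinfo.value is the exception instance that was raised
    assert "70000" in str(excinfo.value)
```

Checking the message as well as the type guards against a test that passes because an unrelated ValueError was raised.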

Apart from these APIs, there are many other helpful APIs like pytest.importorskip, pytest.deprecated_call, pytest.warns, etc.; however, covering all APIs is beyond the scope of the article. For in-depth information about pytest APIs, please have a look at the pytest API reference guide.

Stacking parametrize decorators

If you want to perform testing on different input combinations, you can stack parametrize decorators. Take an example where input values x = [5, 6] and y = [7, 8] are passed to two stacked @pytest.mark.parametrize decorators. The test code which uses these input arguments is run for every combination of x and y, i.e. 2 × 2 = 4 combinations: x = 5/y = 7, x = 6/y = 7, x = 5/y = 8, x = 6/y = 8.
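The number of generated test cases is the product of the sizes of the stacked value lists. As a rough illustration in plain Python (this mimics the set of combinations only; it does not use pytest and makes no claim about the order in which pytest runs them):

```python
from itertools import product

x_values = [5, 6]
y_values = [7, 8]

# Every x is paired with every y: len(x_values) * len(y_values) cases in total
combinations = list(product(x_values, y_values))

for x, y in combinations:
    print(f"x = {x} / y = {y}")
```

Adding a third value to either list would raise the count to six, which is worth keeping in mind when each case launches a browser.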

When you are doing cross browser testing for your web product, there would be requirements where tests have to be performed on combinations of web browsers & web URLs. This is where parametrize stacking can be handy where parameterization in pytest can be done for web browsers & test URLs.

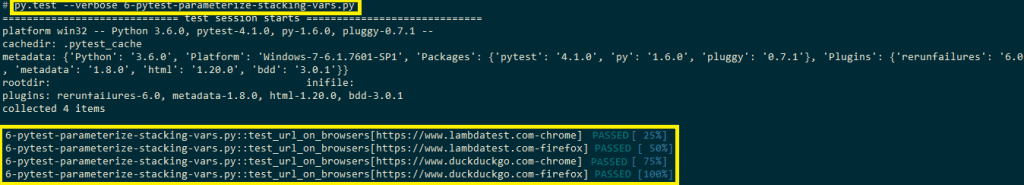

As shown in the example below, browsers (Chrome & Firefox) and input URLs (https://www.lambdatest.com and https://www.duckduckgo.com) are stacked in parametrize decorators. Hence, four test cases are generated, and each browser is tested against two input URLs.

```python
# Import the 'modules' that are required for execution
import pytest
import pytest_html
from selenium import webdriver
from selenium.webdriver.chrome.options import Options
from selenium.webdriver.common.keys import Keys
from time import sleep

global url_under_test

# We make use of the parametrize decorator to supply input arguments
# to the test function
@pytest.mark.parametrize("input_browser", ['chrome', 'firefox'])
@pytest.mark.parametrize("input_url", ['https://www.lambdatest.com', 'https://www.duckduckgo.com'])
def test_url_on_browsers(input_browser, input_url):
    if input_browser == "chrome":
        web_driver = webdriver.Chrome()
    if input_browser == "firefox":
        web_driver = webdriver.Firefox()

    web_driver.maximize_window()
    web_driver.get(input_url)
    print(web_driver.title)
    sleep(5)
    web_driver.close()
```

As seen in the output screenshot below, the stacked parametrize decorators generate four test cases.

pytest_generate_tests – Define custom parameterization

In this Selenium Python tutorial, we will now look into the aspect of custom parameterization in pytest. pytest_generate_tests is a useful hook through which you can parametrize tests and implement a custom parameterization scheme in pytest. Arbitrary parameterization using pytest_generate_tests is done during the collection phase. pytest_generate_tests is a function and not a fixture. The input argument to the pytest_generate_tests is a metafunc object.

metafunc objects help to inspect a test function and generate tests according to the test configuration (or values) specified in the class or module where a test function is defined. metafunc argument to pytest_generate_tests gives some useful information about the test function:

- A look at the name of the function.

- A look at fixture names that the function requests.

- Flexibility to see the code of the function.

The implementation of metafunc class can be found here. Below is the example code which demonstrates the usage of pytest_generate_tests function for parameterization in pytest.

|

1 2 3 4 5 6 7 8 9 10 11 12 |

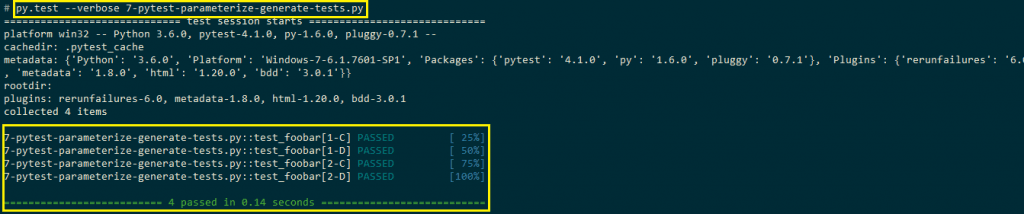

import pytest def pytest_generate_tests(metafunc): if "fix1" in metafunc.fixturenames: metafunc.parametrize("fix1", ["1", "2"]) if "fix2" in metafunc.fixturenames: metafunc.parametrize("fix2", ["C", "D"]) def test_foobar(fix1, fix2): # Compare the data type of the fixtures # Assert is not raised if all the fixture parameters are of String type assert type(fix1) == type(fix2) |

As seen in the implementation, parameterization is done twice (i.e., for fix1 and fix2). Shown below is the output screenshot where the code executes for 4 combinations (1-C, 1-D, 2-C, 2-D). If the data types of fix1 and fix2 do not match, the assertion fails.
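To see what the hook does for tests that request different arguments, it can be called with a stand-in object. This is only a sketch for illustration: RecordingMetafunc is a hypothetical class that imitates the two members used here (fixturenames and parametrize) and is not pytest's real Metafunc.

```python
def pytest_generate_tests(metafunc):
    if "fix1" in metafunc.fixturenames:
        metafunc.parametrize("fix1", ["1", "2"])
    if "fix2" in metafunc.fixturenames:
        metafunc.parametrize("fix2", ["C", "D"])


class RecordingMetafunc:
    """Hypothetical stand-in for pytest's Metafunc; it only records calls."""

    def __init__(self, fixturenames):
        self.fixturenames = fixturenames
        self.calls = []

    def parametrize(self, argnames, argvalues):
        self.calls.append((argnames, list(argvalues)))


# A test that requests both arguments gets both of them parametrized...
both = RecordingMetafunc(["fix1", "fix2"])
pytest_generate_tests(both)
print(both.calls)

# ...while a test that requests only fix2 is parametrized once.
only_fix2 = RecordingMetafunc(["fix2"])
pytest_generate_tests(only_fix2)
print(only_fix2.calls)
```

The membership checks on fixturenames are what keep the hook from parametrizing arguments that a given test function never asks for.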


We port the previous cross browser testing example by accommodating the changes required for pytest_generate_tests.

```python
# Import the 'modules' that are required for execution
import pytest
import pytest_html
from selenium import webdriver
from selenium.webdriver.chrome.options import Options
from selenium.webdriver.common.keys import Keys
from time import sleep

# @pytest.mark.parametrize("input_browser", ['chrome', 'firefox'])
# @pytest.mark.parametrize("input_url", ['https://www.lambdatest.com', 'https://www.duckduckgo.com'])

def pytest_generate_tests(metafunc):
    if "input_browser" in metafunc.fixturenames:
        metafunc.parametrize("input_browser", ["chrome", "firefox"])
    if "input_url" in metafunc.fixturenames:
        metafunc.parametrize("input_url", ["https://www.lambdatest.com", "https://www.duckduckgo.com"])

def test_url_on_browsers(input_browser, input_url):
    if input_browser == "chrome":
        web_driver = webdriver.Chrome()
    if input_browser == "firefox":
        web_driver = webdriver.Firefox()

    web_driver.maximize_window()
    web_driver.get(input_url)
    print(web_driver.title)
    sleep(5)
    web_driver.close()
```

The only change we have done is the addition of pytest_generate_tests with parameterization done for browser and test URL.

```python
def pytest_generate_tests(metafunc):
    if "input_browser" in metafunc.fixturenames:
        metafunc.parametrize("input_browser", ["chrome", "firefox"])
    if "input_url" in metafunc.fixturenames:
        metafunc.parametrize("input_url", ["https://www.lambdatest.com", "https://www.duckduckgo.com"])
```


Parameterization for cross browser automation testing

We have covered a couple of examples where parameterization in pytest was used for cross browser testing. One roadblock you would encounter with local Selenium Grid for cross browser testing is heavy investment in building an infrastructure for testing across different browsers, devices, and operating systems.

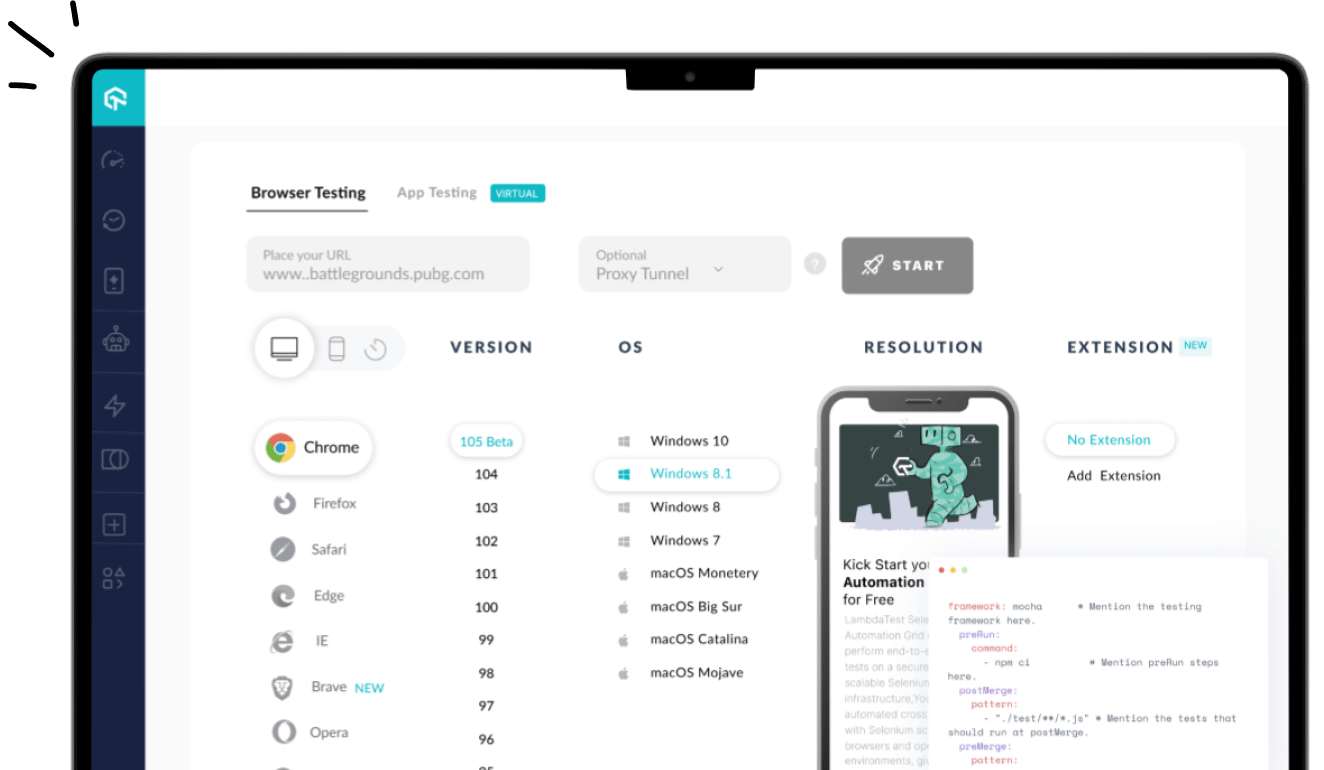

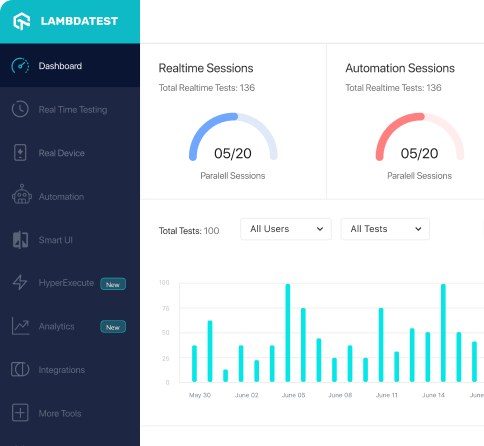

This is where cross browser testing on the cloud can be more effective since you need not worry about setting up the infrastructure required for test automation. Using LambdaTest, you can test your code on 3000+ Real Browsers and Operating Systems online. Porting the Python code to the LambdaTest platform requires minimal effort.

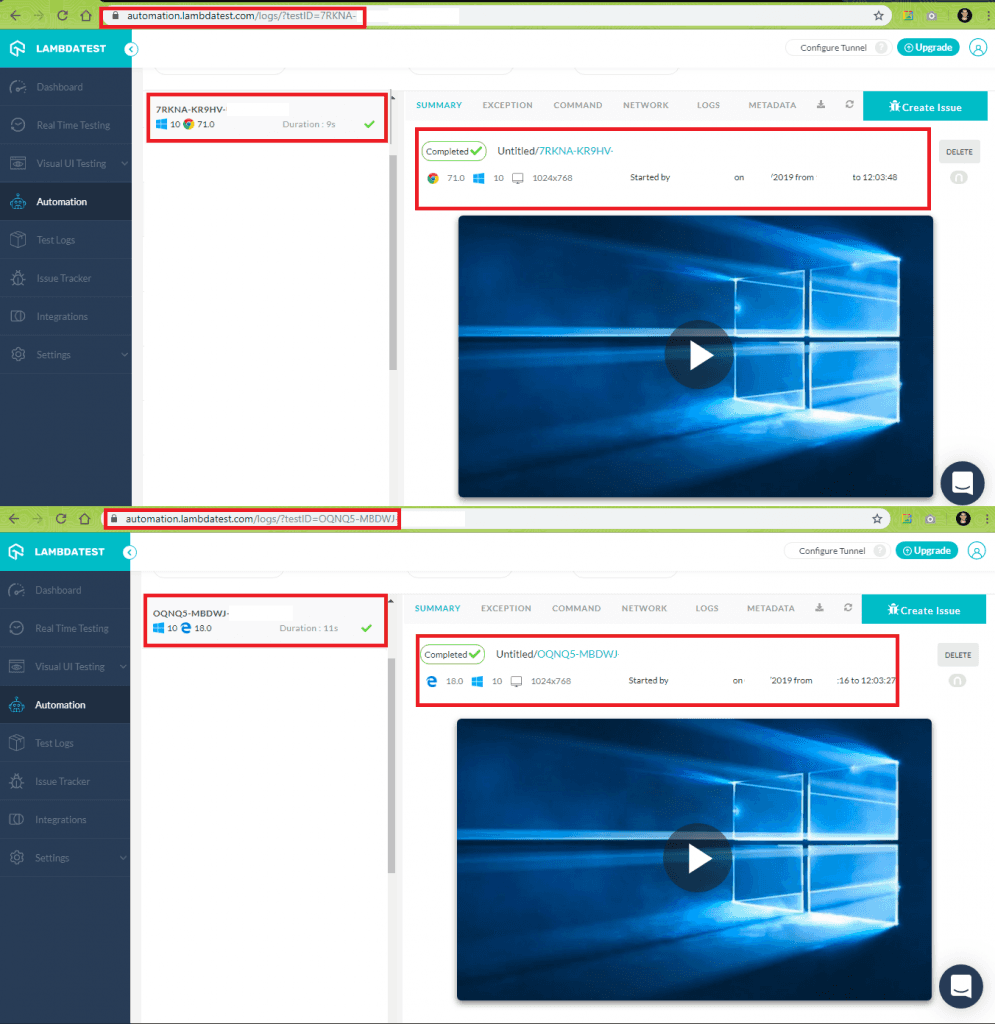

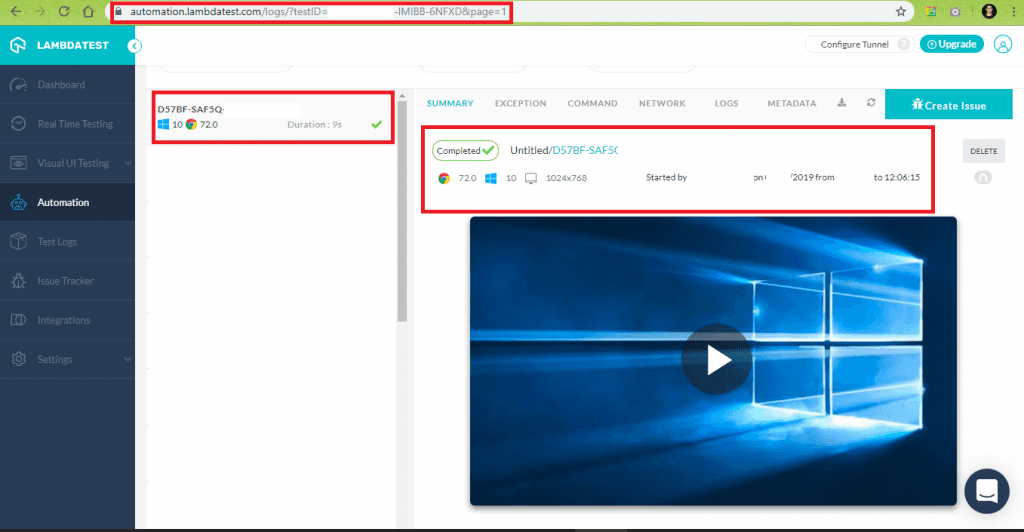

To get started with test automation with pytest and Selenium WebDriver, you need to create an account on LambdaTest. Once the account is created, do make a note of the user-name and access-token from the profile page on LambdaTest. Browser capabilities for the browser under test can be set using the LambdaTest capabilities generator. You can keep track of all the automation tests by visiting https://automation.lambdatest.com/. Each test will have a test-id and build-id associated with it, the format is https://automation.lambdatest.com/logs/?testID=<test-id>&build=<build-id>

To demonstrate the usage of parameterization and the LambdaTest platform, we have come up with a simple problem statement – Open DuckDuckGo search engine on browsers (Microsoft Edge, Chrome, and Firefox) and perform a search for “LambdaTest.” For modularity, we make use of user-defined command-line arguments so that testing can be performed by supplying required arguments (browser, browser version, and operating system) on the terminal.

conftest.py

To get started, we create a conftest.py that contains the implementation of fixture functions that need to be shared between different files. The parser.addoption() function is used to register the different command-line options.

Command-line options for our test are below:

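- -B, --browser: Browser on which testing is performed. Can be Firefox, Microsoft Edge, Internet Explorer, etc.

- -P, --platform: OS on which testing is performed, e.g., Windows 10.0, macOS Sierra, etc.

- Browser version: Version of the browser on which testing is performed.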

If the value of the --browser (-B) command-line option is "all", a predefined set of cross browser tests is executed; otherwise, the values supplied on the command line for the options registered via parser.addoption() are parsed, stored & passed to the respective fixtures.

```python
def pytest_generate_tests(metafunc):
    "test generator function to run tests across different parameters"
    if 'browser' in metafunc.fixturenames:
        if metafunc.config.getoption("-B") == "all":
            # Parameters are passed according to the one generated by
            # https://www.lambdatest.com/capabilities-generator/
            metafunc.parametrize("platform,browser,browser_version",
                                 cross_browser_configuration.LT_cross_browser_config)
```

The code snippet of the function used to take command-line options is below:

```python
def pytest_addoption(parser):
    parser.addoption("-P","--platform",
                     dest="platform",
                     action="store",
                     help="OS on which testing is performed: Windows 10.0, macOS Sierra, etc.",
                     default="")
    ..........................
```

Each command-line option also has a fixture function associated with it. In our case, since there are three command-line options, we have three different fixture functions, i.e., one for each command-line argument. Code snippet is below:

```python
@pytest.fixture
def platform(request):
    "fixture for platform - can be Windows 10.0, OSX Sierra, etc."
    "Equivalent entry for 'platform' : platform-name"
    return request.config.getoption("-P")
```

conftest.py [Complete Implementation]

cross_browser_configuration.py

If the command-line arguments that are passed during testing are -B "all" or --browser "all", a predefined configuration (consisting of browsers, browser versions, and operating systems [platforms]) is used for testing. The arguments used are in accordance with the capabilities generated by the LambdaTest capabilities generator. Shown below are the capabilities generated for the Microsoft Edge browser (version 18.0) on the Windows 10 platform.

```python
capabilities = {
    ..........................
    "platform" : "Windows 10",
    "browserName" : "MicrosoftEdge",
    "version" : "18.0"
}
```

In-line with the cross browser testing requirements & syntax used by LambdaTest capabilities generator, arrays for each of these are defined in the code

```python
platform_list = ["Windows 10"]
browsers = ["MicrosoftEdge", "Chrome"]
chrome_test_versions = ["71.0"]
..........................
..........................
```

In the function generate_LT_configuration(), we iterate through the browser list, e.g., Microsoft Edge, Chrome, etc., and generate different test combinations based on the browser versions and the operating systems on which tests have to be carried out. Some of the test combinations that are generated are below:

It is important to note that generate_LT_configuration() will only be invoked if the command-line parameter is -B "all" or --browser "all".
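Since the body of generate_LT_configuration() is not reproduced here, the following is only a sketch of how such a generator could be written. The versions_by_browser dictionary is a hypothetical structure introduced for this example, and the Edge version is assumed from the "18.0" capability shown earlier.

```python
platform_list = ["Windows 10"]
browsers = ["MicrosoftEdge", "Chrome"]
# Hypothetical lookup of versions per browser; the Edge entry is an
# assumption based on the capabilities example shown earlier
versions_by_browser = {
    "Chrome": ["71.0"],
    "MicrosoftEdge": ["18.0"],
}


def generate_LT_configuration():
    """Return (platform, browser, browser_version) tuples for every combination."""
    combinations = []
    for browser in browsers:
        # Browsers without a version list simply contribute no combinations
        for version in versions_by_browser.get(browser, []):
            for platform in platform_list:
                combinations.append((platform, browser, version))
    return combinations
```

Each tuple matches the "platform,browser,browser_version" argument string passed to metafunc.parametrize(), so the returned list can be handed to it directly. With the lists above, the call returns two combinations, one for Microsoft Edge 18.0 and one for Chrome 71.0, both on Windows 10.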

cross_browser_configuration.py [Complete Implementation]


test_exec.py

Remote Selenium WebDriver setup for the respective web browsers is done in configure_webdriver_interface(). The remote URL assigned to command_executor [in webdriver.Remote()] combines the user name and access token for user authentication. You can find those details on your LambdaTest profile page.

```python
remote_url = "http://" + user_name + ":" + app_key + "@hub.lambdatest.com/wd/hub"
```

Browser, browser version, and platform are assigned to the respective fields to form desired_capabilities [in webdriver.Remote()].

```python
# Configuration of Selenium WebDriver
def webdriver_interface_conf(platform,browser,browser_version):
    urllib3.disable_warnings(urllib3.exceptions.InsecureRequestWarning)

    user_name = "user-name"
    app_key = "app-key"
    .............................................
    if browser == 'chrome' or browser == 'Chrome':
        # Copy so the shared DesiredCapabilities.CHROME dict is not mutated
        desired_capabilities = DesiredCapabilities.CHROME.copy()
        desired_capabilities['browserName'] = browser
        desired_capabilities['platform'] = platform
        desired_capabilities['version'] = browser_version
    .............................................
    remote_url = "http://" + user_name + ":" + app_key + "@hub.lambdatest.com/wd/hub"

    return webdriver.Remote(command_executor=remote_url, desired_capabilities=desired_capabilities)
```
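The URL and capability assembly can also be separated from the WebDriver call, which makes it easy to check without a network connection. The helpers below are a sketch, not part of the original test_exec.py: build_remote_url() and build_capabilities() are hypothetical names, and percent-encoding the credentials is an added precaution for user names that contain characters such as "@".

```python
from urllib.parse import quote


def build_remote_url(user_name, app_key, hub="hub.lambdatest.com/wd/hub"):
    """Compose the hub URL, percent-encoding both credentials."""
    return "http://{}:{}@{}".format(
        quote(user_name, safe=""), quote(app_key, safe=""), hub
    )


def build_capabilities(platform, browser, browser_version):
    """Return a fresh capabilities dict for one browser/platform combination."""
    # A new dict per call avoids sharing state between parametrized runs
    return {
        "platform": platform,
        "browserName": browser,
        "version": browser_version,
    }
```

The two return values correspond to the command_executor and desired_capabilities arguments used above. Note that the desired_capabilities argument depends on the Selenium version in use; newer Selenium 4 releases are understood to expect an options object instead, so check the installed release before relying on it.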

Since we are using the pytest framework for testing, the test name should start with test_. Here, we carry out only one test – test_send_keys_browser_combs() which demonstrates usage of ActionChains.

test_exec.py [Complete Implementation]

Execution

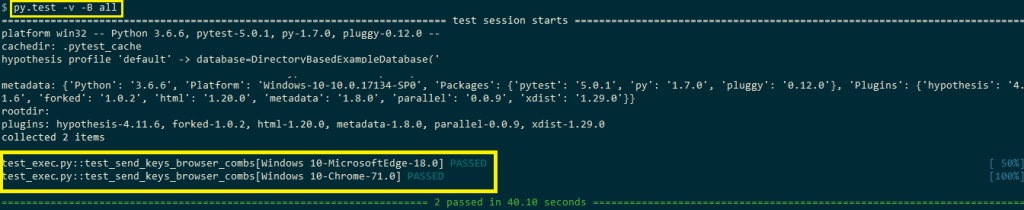

Now that the implementation is complete, we trigger the cross browser tests on LambdaTest using predefined combinations (browser, browser version, and platform) as well as combinations specified through command-line arguments.

Pre-defined combinations

This is triggered using the following command:

```bash
py.test --browser "all"
```

You can find the status of the test in the Automation tab on the LambdaTest website. You should switch to ‘Test View’ as we have not specified the build details in the capabilities. The test view is accessible at https://automation.lambdatest.com/timeline/?viewType=test&page=1. Shown below are the output snapshot and the execution snapshot on LambdaTest.

User-defined combinations

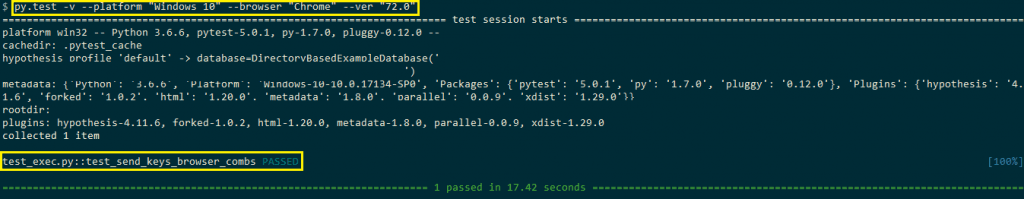

This is triggered using the following command:

```bash
py.test -v --platform <platform-name> --browser <browser-name> --ver <browser-version>

py.test -v --platform "Windows 10" --browser "Chrome" --ver "72.0"
```

The browser on which testing is performed is Chrome (version 72.0), and the platform is Windows 10. Shown below is the output snapshot and execution snapshot on LambdaTest.


Conclusion

In this Selenium Python tutorial, we saw how parameterization in pytest can be used to test multiple scenarios by changing only the input values. Rather than supplying the test data manually, you can use pytest.fixture, the @pytest.mark.parametrize decorator, and pytest_generate_tests() for parameterization in pytest. Cross browser testing on the cloud, e.g., LambdaTest, can be used with pytest and Selenium to accelerate testing and build scalable test cases.

Frequently Asked Questions

What is a fixture in Pytest?

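A fixture is a function that pytest runs before (and, when it uses yield, after) each test function that requests it, or automatically for every test when it is declared with autouse=True. Fixtures are used to feed data to the tests, such as database connections, URLs to test, and other input data. A fixture function defined inside a test file is visible only within that file, whereas fixtures defined in conftest.py are shared across the test files in that directory.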

What is yield in Pytest?

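In pytest, yield is used inside a fixture to split it into setup and teardown. The code before yield runs before the test, the yielded value is passed to the test, and the code after yield runs once the test finishes, even if the test fails.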

What is Pytest Mark Parametrize?

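@pytest.mark.parametrize is a built-in decorator that runs a test function once for each set of argument values supplied to it, so a single invocation of the pytest command covers every data set.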

What is parameterization in pytest?

Parameterization in pytest is a feature that allows developers to run a test function multiple times with different arguments. The pytest.mark.parametrize decorator is used to specify the arguments and values to be used in the test function.

What is the difference between pytest fixtures and parametrize?

Pytest fixtures are used to set up a fixed baseline on which tests can reliably and repeatedly execute. They provide a consistent context for tests. On the other hand, pytest.mark.parametrize is used to run the same test function with different values, thereby enhancing test coverage with a variety of test data.

How does pytest execute a parameterized test?

Pytest executes a parameterized test by iterating over the input values provided in the pytest.mark.parametrize decorator. For each set of values, it runs the test function, treating each run as a separate test case.
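As a small illustration of that behavior, the sketch below parametrizes one test with two hypothetical browser combinations; the test name and the values are made up for this example.

```python
import pytest


@pytest.mark.parametrize(
    "platform,browser,browser_version",
    [
        ("Windows 10", "Chrome", "71.0"),
        ("Windows 10", "MicrosoftEdge", "18.0"),
    ],
)
def test_combination_is_complete(platform, browser, browser_version):
    # pytest collects and reports this function once per tuple above
    assert platform and browser and browser_version
```

Running pytest with -v on this file lists two separate test items, each with its own pass or fail status.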

What is parametrize in Python?

Parametrize in Python, especially in the context of testing frameworks like pytest, is a technique that allows developers to run a single test function with different sets of input values and configurations, effectively creating multiple distinct test cases from one base test function.
